Hardware Pioneers 2022: Secure and low bandwidth EdgeAI with OctaiPipe

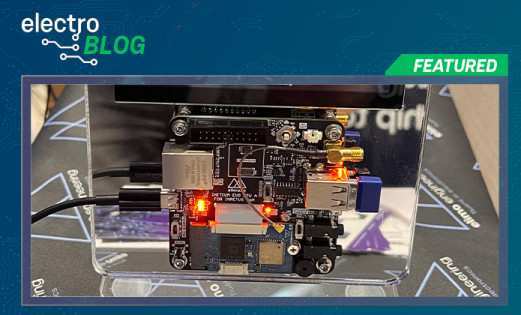

At Hardware Pioneers 2022, we stopped by OctaiPipe to chat about the future of EdgeAI, and how it addresses both the digital divide (the uneven distribution of internet access and speed worldwide) and growing data security risks.

OctaiPipe, created by T-Dab.ai, is a platform for creating and deploying EdgeAI using Federated Learning (sometimes known as Collaborative Learning), a technique that decentralizes AI model training. We chatted with Dr Eric Topham, CEO and Data Science Director at T-Dab.ai, about the benefits of systems like this compared to traditional Machine Learning techniques.

Federated Learning for EdgeAI devices seems like a great way to approach several problems. Sending huge amounts of data from devices to the cloud isn't really an option outside of places with fast, high-bandwidth internet connections. Even leaving that point aside, security is a growing concern across the consumer and industrial device market, especially in the medical sector, and streaming potentially sensitive data to the cloud is a disaster waiting to happen.

This method gives each of a large number of distributed devices a very specific task: to train its own instance of a model using only local data. The resulting model update is then sent to the cloud in place of the user data. There, the updates from all devices are aggregated into a single model, which can be distributed across the whole device mesh.
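To make that train-locally, aggregate-centrally loop concrete, here is a minimal sketch of one common aggregation scheme, federated averaging, in plain Python. It is not OctaiPipe's implementation or API: the tiny linear model, the synthetic data, and every function name (`local_train`, `aggregate`, `federated_round`, `make_device_data`) are assumptions made purely for illustration.

```python
import random

def local_train(weights, data, lr=0.05, epochs=20):
    """Runs on a device: refine a copy of the global model on local data only.

    The model here is a hypothetical 1-D linear fit y = w * x + b.
    Only the trained weights and the sample count leave the device.
    """
    w, b = weights
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in data:
            err = (w * x + b) - y
            grad_w += 2 * err * x
            grad_b += 2 * err
        w -= lr * grad_w / len(data)
        b -= lr * grad_b / len(data)
    return (w, b), len(data)

def aggregate(updates):
    """Runs in the cloud: average device weights, weighted by sample count."""
    total = sum(n for _, n in updates)
    w = sum(wt[0] * n for wt, n in updates) / total
    b = sum(wt[1] * n for wt, n in updates) / total
    return (w, b)

def federated_round(global_weights, device_datasets):
    """One round: every device trains locally, then the server aggregates."""
    updates = [local_train(global_weights, data) for data in device_datasets]
    return aggregate(updates)

def make_device_data(rng, n):
    """Synthetic local data (an assumption): y = 3x + 1 plus a little noise."""
    xs = [rng.uniform(-1, 1) for _ in range(n)]
    return [(x, 3.0 * x + 1.0 + rng.gauss(0, 0.05)) for x in xs]

rng = random.Random(0)
# Three simulated devices with different amounts of private data.
devices = [make_device_data(rng, n) for n in (20, 35, 50)]

global_weights = (0.0, 0.0)
for _ in range(30):
    global_weights = federated_round(global_weights, devices)

print("aggregated model (w, b):", global_weights)
```

Note that `aggregate` only ever sees two numbers and a sample count per device, never the `(x, y)` pairs themselves, which is the property the article describes. A real deployment would add things this sketch leaves out, such as secure transport and handling devices that drop out mid-round.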

Each part of the model is only a few kilobytes in size, the same kind of small footprint that allows AI platforms like Neuton and Edge Impulse to deploy such tiny models onto microcontrollers. The difference here is that the raw data never reaches the cloud. It's a compelling solution to some of the pressing issues facing distributed AI systems.

To find out more about the project, head to the T-Dab.ai website.
