TinyML FOMO: Face Mask Detection System Using Edge Impulse

About the project

In this project, we will create our own face mask detection system using Edge Impulse's FOMO algorithm and deploy it to an OpenMV Cam H7 Plus.

Project info

Difficulty: Moderate

Platforms: Edge Impulse

Estimated time: 3 hours

License: GNU General Public License, version 3 or later (GPL3+)


Story

Embedded devices often have limited memory, processing power, and energy capacity. Most embedded systems developers train their machine learning (ML) models in the cloud, for example on Edge Impulse, or on high-performance computers. While most ML workloads still run in the cloud, there is a growing trend toward running them on edge devices such as the Espressif ESP32, Raspberry Pi, and Nvidia Jetson. In particular, a technology called Tiny Machine Learning (TinyML) has paved the way to meet the challenges of edge devices. It allows data to be processed locally on the device without sending it to the cloud. In addition, TinyML makes it possible to run inference with ML models, including deep learning (DL) models, on a resource-constrained device such as a microcontroller.

In this project, I will show you how to create a face mask detection system by training a model on the Edge Impulse platform and deploying it to an edge device such as the OpenMV H7 Plus. The project uses FOMO, a recently launched ML technique from Edge Impulse researchers.

This project consists of three phases:

- Data gathering and labeling

- Training the model using Edge Impulse

- Inference of Face Mask Detection system on the edge device

For this project, we’ll need the following components:

What You’ll Need for Face Mask Detection system

- Development board officially supported by the Edge Impulse platform. Here I have used the OpenMV H7 Plus.

As you may already know, Edge Impulse is a software as a service (SaaS) platform for developing intelligent devices using embedded machine learning (TinyML).

FOMO (Faster Objects, More Objects), a new ML technique developed by researchers at Edge Impulse, makes it possible to run real-time object detection on devices with very little compute and memory capacity.

Source: https://www.edgeimpulse.com/blog/announcing-fomo-faster-objects-more-objects
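
To make the idea more concrete, here is a minimal Python sketch of how a FOMO-style output can be read. It assumes the model produces a coarse grid of per-cell scores for one class, with each cell covering an 8x8 pixel patch of the input (to my understanding this is the default reduction factor described in Edge Impulse's FOMO documentation). The function name `cells_to_centroids`, the 0.5 threshold, and the sample grid are illustrative assumptions, not part of any Edge Impulse API.

```python
def cells_to_centroids(heatmap, threshold=0.5, cell_size=8):
    """Return (x, y, score) centre points, in input-image pixels,
    for every grid cell whose score reaches the threshold."""
    centroids = []
    for row, scores in enumerate(heatmap):
        for col, score in enumerate(scores):
            if score >= threshold:
                # Centre of the cell, mapped back to input-image pixels.
                x = col * cell_size + cell_size // 2
                y = row * cell_size + cell_size // 2
                centroids.append((x, y, score))
    return centroids


# Hypothetical 3x3 score grid for a single class (a 24x24 pixel input).
sample_heatmap = [
    [0.1, 0.0, 0.2],
    [0.0, 0.1, 0.9],
    [0.0, 0.0, 0.3],
]
detections = cells_to_centroids(sample_heatmap)
```

A real deployment would also merge neighbouring cells that belong to the same object; this sketch skips that step to keep the grid-to-pixel mapping easy to follow.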


OpenMV Cam H7 Plus

The OpenMV H7 Plus camera is a compact, high-resolution, low-power computer vision development board. Unlike conventional cameras, it has a built-in microcontroller for on-the-fly image processing and control of external devices.

The OpenMV H7 Plus is a tiny camera module that only costs about $80 USD.

Using the OpenMV H7 Plus, you can make your own video surveillance system with face recognition, digital vision for a robot, or a sorting system in production. The camera can also act as a QR code reader or a line sensor.

The OpenMV H7 Plus is programmed in MicroPython using the OpenMV IDE.

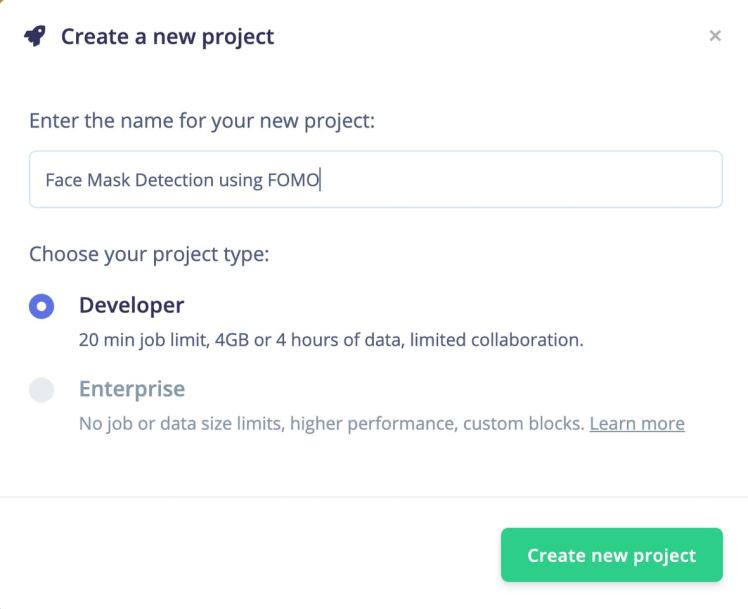

Face mask detection system project

Go to the Edge Impulse platform, enter your credentials at Login (or create an account), and start a new project.

- Select the Images option.

- Then click the Classify Multiple Objects option.

- Download a face mask dataset from Kaggle.

- Click the Go to the uploader button.

- Upload the images. Edge Impulse can automatically split the data between training and testing sets. In my case, I uploaded around 100 images in total.

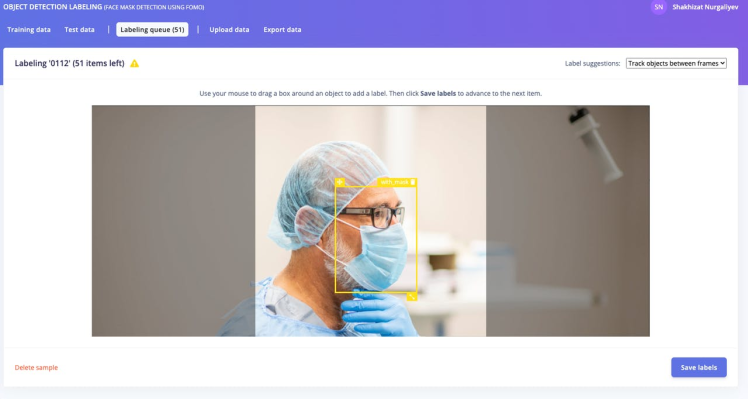

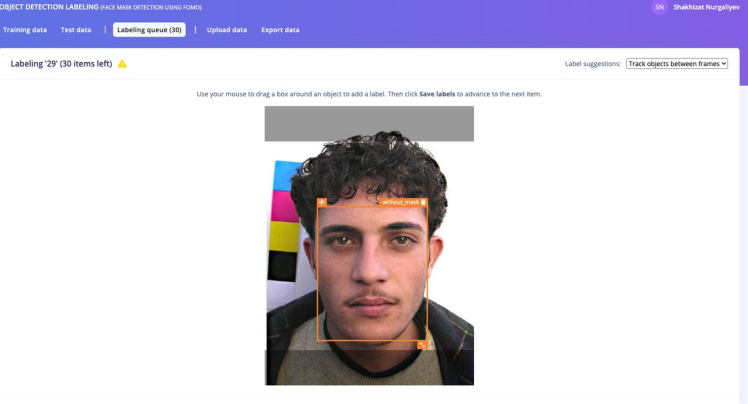

- For face mask detection, each object needs a labeled bounding box so the model can learn the classes. Therefore, annotate the images using the Edge Impulse annotation tool. Because we want to both train and test on the image data set, split it 80/20 between training and testing.


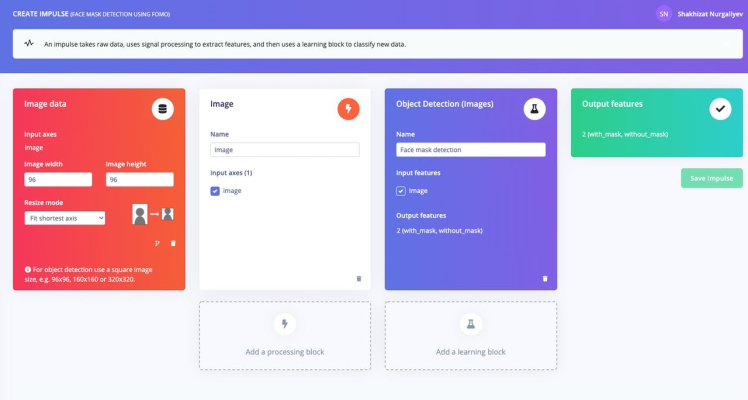
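
Edge Impulse performs the train/test split for you, so the following is only a sketch of what an 80/20 split amounts to. The function `split_dataset`, the fixed seed, and the file names are hypothetical and chosen purely for illustration.

```python
import random


def split_dataset(filenames, train_fraction=0.8, seed=42):
    """Shuffle the file names reproducibly and split them into
    a training list and a testing list."""
    items = sorted(filenames)           # stable starting order
    random.Random(seed).shuffle(items)  # reproducible shuffle
    cut = round(len(items) * train_fraction)
    return items[:cut], items[cut:]


# Hypothetical file names standing in for about 100 uploaded images.
images = ["mask_%03d.jpg" % i for i in range(100)]
train_files, test_files = split_dataset(images)
```

Shuffling before cutting matters: if the images are sorted by class or by source, taking the first 80% without shuffling would leave the testing set unrepresentative.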

- Once you have your dataset ready, go to the Create Impulse section.

- Rename it to Face mask detection.

- Click the Save Impulse button.

- Go to the Image section.

- Select Grayscale as the color depth. FOMO uses grayscale features. Then press Save parameters.

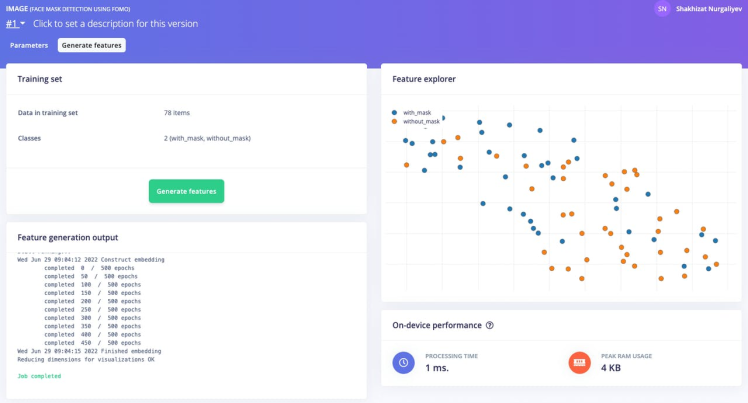

- Finally, click on the Generate features button. You should get a response that looks like the one below.

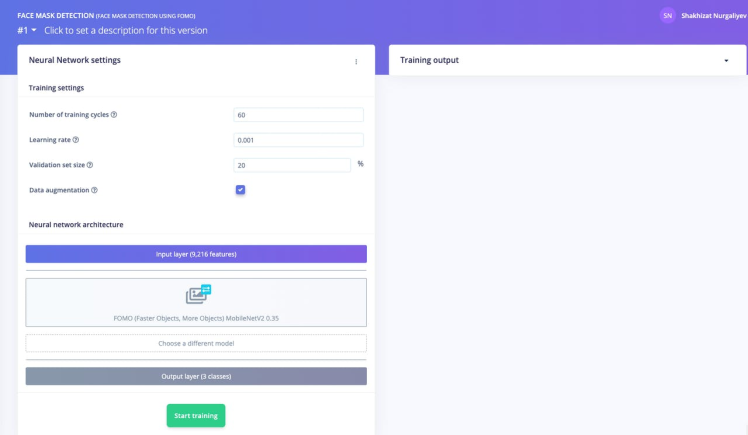
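
To see why grayscale suits a small device, the sketch below converts an RGB pixel to a single brightness value and compares the number of raw features per image. The BT.601 luma weights and the 96x96 input size are assumptions chosen for illustration; Edge Impulse's own conversion and the image size in your impulse may differ.

```python
def rgb_to_gray(pixel):
    """Convert an (r, g, b) pixel with 0-255 channels to one 0-255 value.
    Uses BT.601 luma weights in integer arithmetic (an assumed choice)."""
    r, g, b = pixel
    return (299 * r + 587 * g + 114 * b + 500) // 1000


def feature_count(width, height, channels):
    """Number of raw values the model receives for one image."""
    return width * height * channels


# Assumed 96x96 input, for illustration only.
gray_features = feature_count(96, 96, 1)
rgb_features = feature_count(96, 96, 3)
```

Under these assumptions a grayscale image carries one third of the raw values of an RGB image, which reduces both the memory needed for the input buffer and the work done by the first layer of the network.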

- Go to the Face Mask Detection section. Leave the hyperparameters at their default values.

Here, we used the default model, MobileNetV2 0.35. You can also choose a different model, MobileNetV2 0.1. Experiment with both options while building your model.

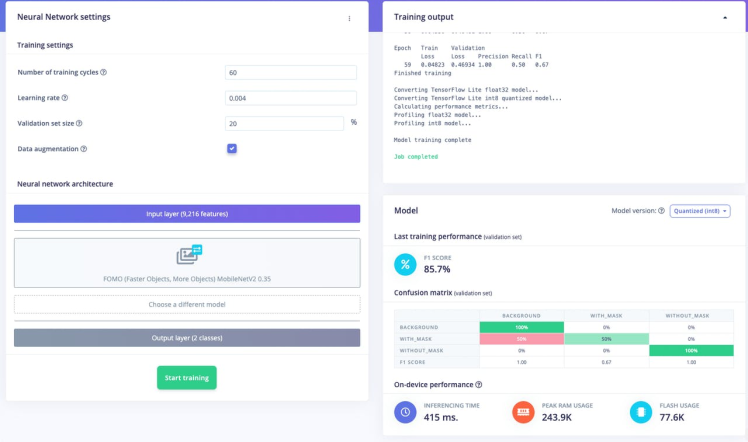

Train the model by pressing the Start training button. This process might take around 5-10 minutes, depending on the size of your data set. If everything goes correctly, you should see the following in Edge Impulse.

You should not expect 100% accuracy on your training dataset. In my humble opinion, anything greater than 70% is good model performance.

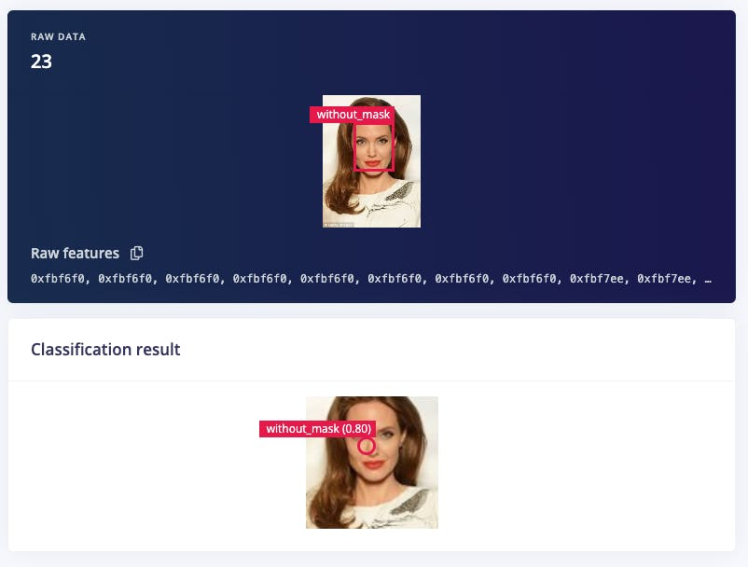

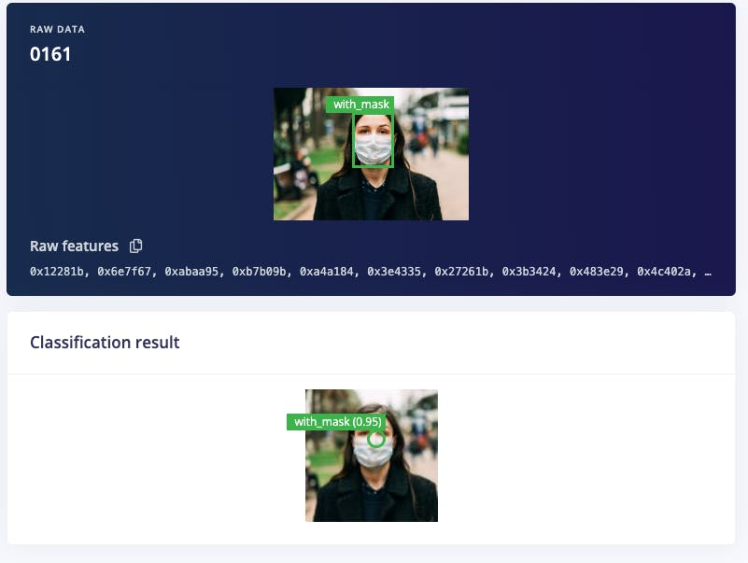
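
When judging a score like this for an object detector, it helps to know how it can be derived. The sketch below computes precision, recall, and the F1 score from counts of correct and incorrect detections. This is a general-purpose illustration, and it is an assumption that the figure shown in your Edge Impulse project is computed the same way; the function name and the example counts are hypothetical.

```python
def f1_score(true_positives, false_positives, false_negatives):
    """Harmonic mean of precision and recall; 0.0 when undefined."""
    found = true_positives + false_positives
    actual = true_positives + false_negatives
    precision = true_positives / found if found else 0.0
    recall = true_positives / actual if actual else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical counts: 8 masks found correctly, 2 false alarms, 2 missed.
score = f1_score(8, 2, 2)
```

Unlike plain accuracy, this score drops both when the model raises false alarms and when it misses objects, which is why it is a common choice for detection tasks.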

After the model’s training is complete, the next step is to run model testing in the Edge Impulse platform. Go to the Live Classification section of Edge Impulse.

After execution, you should see something like this:


Note that it probably won’t be very accurate, because we used a very small training set!

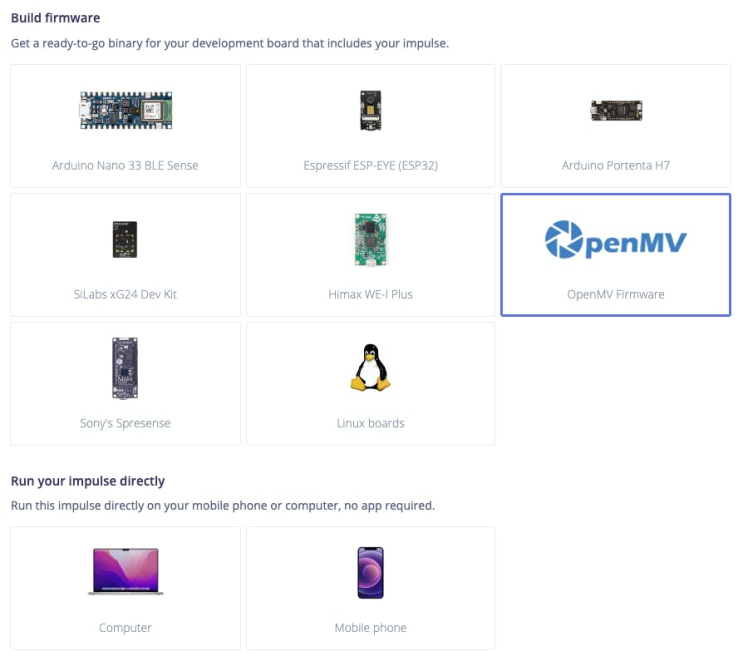

Once you are done building your model, head over to the Deployment section and deploy it to one of the supported edge devices. ML model deployment is the process of putting a trained and tested ML model into a production environment, such as an edge device, where it can be used for its intended purpose.

Go to the Deployment tab of Edge Impulse. Select the firmware type for your edge device. In my case, it is OpenMV Firmware.

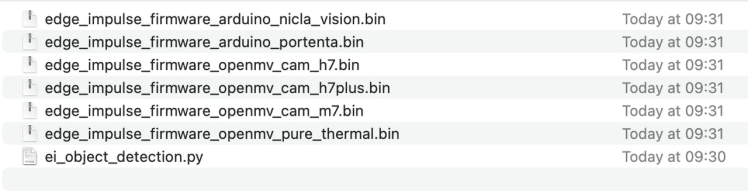

At the bottom of the section, press the Build button. A zip file will be downloaded automatically to your computer. Unzip it.

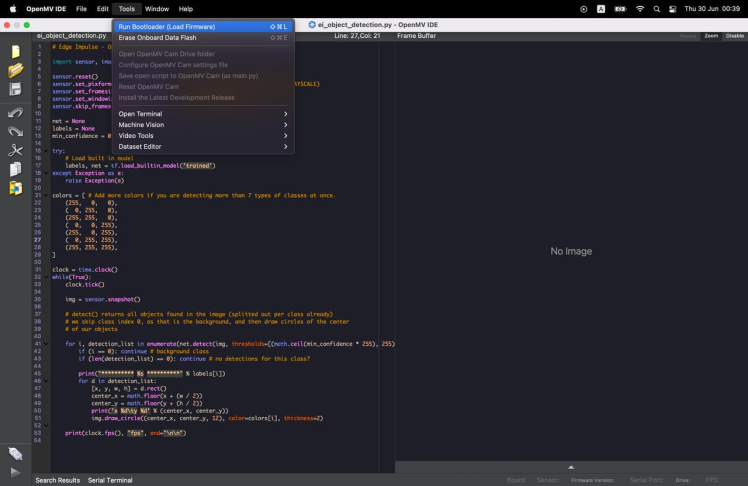

Open the Python script ei_object_detection.py in the OpenMV IDE.

Go to Tools in the menu bar and click Run Bootloader (Load Firmware).

Loading the firmware onto your development board may take several minutes. Once this is done, click the Play button in the bottom left corner. Then open the serial monitor.

Here is a demonstration video of what the final result looks like.

The Serial Monitor should show output similar to the demonstration above. One key difference of the FOMO algorithm is that the detector outputs the centroids of the detected objects rather than bounding boxes.

Congratulations! You have trained your edge device to recognize face masks using the FOMO (Faster Objects, More Objects) ML algorithm offered by Edge Impulse. You can further expand this project! Go ahead!
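
As a rough illustration of how a centre point can be obtained on the device side, the sketch below assumes each detection arrives as a small rectangle in the form (x, y, w, h), which is the tuple layout OpenMV uses for rectangles. The helper name `rect_to_centroid` and the sample rectangles are hypothetical, and the script generated for your project may differ in its details.

```python
import math


def rect_to_centroid(rect):
    """Return the integer centre point (x, y) of an (x, y, w, h) rectangle."""
    x, y, w, h = rect
    center_x = math.floor(x + w / 2)
    center_y = math.floor(y + h / 2)
    return center_x, center_y


# Hypothetical detections; real values come from the model at run time.
sample_rects = [(40, 16, 8, 8), (3, 5, 7, 9)]
centroids = [rect_to_centroid(r) for r in sample_rects]
```

Only the centre of each rectangle is meaningful here. The rectangle size reflects the grid cell of the detector rather than the true extent of the face or mask, so it should not be read as a bounding box.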
