Cellular Automated Hydroponics System With Blues & Qubitro

About the project

The objective of this project is to develop an Automated Hydroponics system that integrates cellular IoT.

Project info

Difficulty: Difficult

Platforms: Adafruit, Arduino, DFRobot, Seeed Studio

Estimated time: 3 days

License: GNU General Public License, version 3 or later (GPL3+)


Story

An IoT-based hydroponics system is a smart approach to cultivating plants without soil. It relies on sensors and actuators connected to the internet to control water and nutrient delivery. Hydroponics, as an agricultural method, offers numerous advantages, including water conservation, space efficiency, resource optimization, increased crop yield and quality, and a reduction in pests and diseases. Through the use of IoT devices, farmers can remotely monitor and manage their hydroponic farms. They leverage data and analytics to fine-tune the growth environment and automate irrigation and nutrient distribution.

This project will show you how to build a Cellular Controlled Automated Hydroponics system with Blues and Qubitro.

Hardware Requirements ⚙️:

- LTE-M Notecard Global

- Notecarrier A with LiPo, Solar, and Qwiic Connectors

- Arduino Edge Control

- LattePanda 3 Delta

- Xiao ESP32S3 Sense

- UniHiker

- DFRobot 8.9 Inch IPS Touch Screen Display

- DFRobot pH Sensor

- DFRobot Turbidity Sensor

- Grove Soil Moisture Sensor

- Dallas Water Temperature Sensor

- Water Flow Sensor

- Mini Water Pump

- Grove Light Sensor

- Grove Ultrasonic Sensor

- Nordic Thingy:91

Software Requirements:

The Flow:

Here is the flow diagram of my hydroponics system.

In this automated hydroponics system, I have employed three distinct controllers for the control system, with one dedicated to the user backend system.

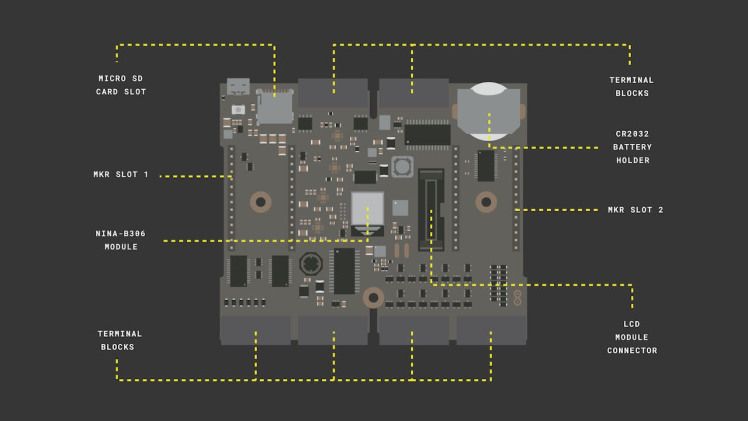

The first controller in use is the Arduino Edge Control hardware. The Edge Control device is designed for monitoring and managing various elements of outdoor environments, including agriculture, irrigation, weather conditions, and more.

It can collect sensor data and send it to the cloud or use artificial intelligence on the edge to make decisions. It can also control actuators like valves, relays, pumps, etc. The Edge Control has built-in Bluetooth and can be connected to other wireless networks by adding Arduino MKR boards. It is powered by a battery that can be recharged by a solar panel or a DC input.

In this hydroponics setup, all of my analog sensors (soil moisture, pH, and water turbidity), as well as the water pump, are connected to the Edge Control. The hydroponics system needs a miniature water pump running continuously, so to give it an uninterrupted power supply, I connected the pump directly to the 5V output of the Edge Control. The Edge Control then transmits all analog sensor readings to the LattePanda 3 Delta via UART.

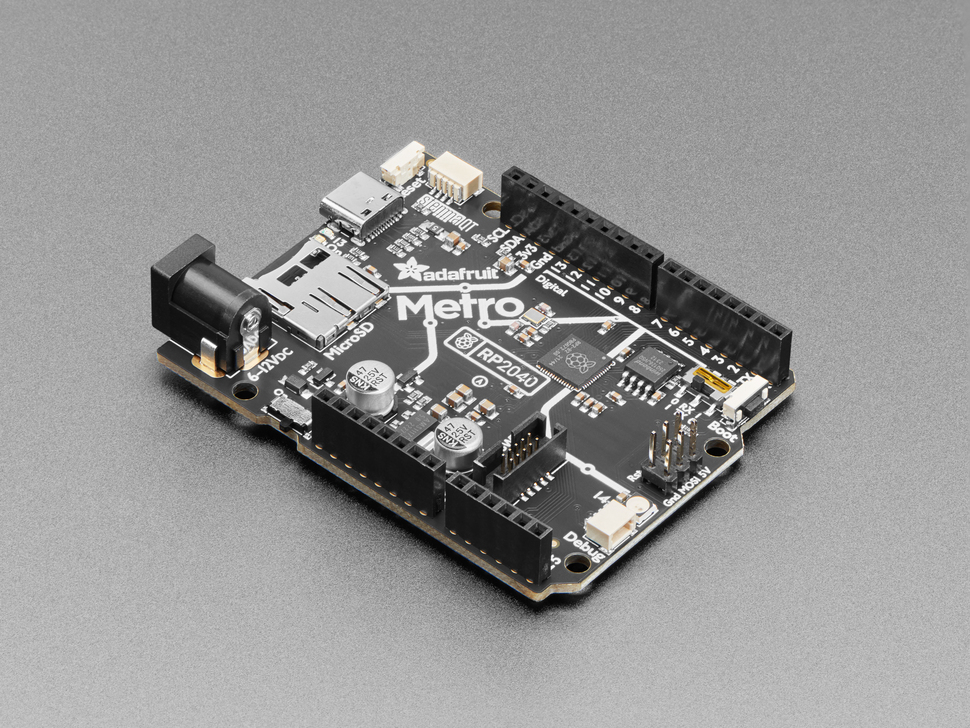

The next big brain of this hydroponics system is the LattePanda 3 Delta.

The LattePanda 3 Delta is a single-board computer powered by an Intel Celeron N5105 processor, featuring 8GB of RAM and 64GB of eMMC storage. It's a versatile platform capable of running Windows 10, Windows 11, or various Linux operating systems. Additionally, it boasts an integrated Arduino Leonardo co-processor, enabling seamless interaction with sensors and actuators. The LattePanda 3 Delta supports a range of connectivity options, including Wi-Fi 6, Bluetooth 5.2, Gigabit Ethernet, USB 3.2 Gen2, HDMI 2.0b, and DisplayPort.

It comes equipped with an inbuilt Arduino core, which facilitates the connection of the Edge Control to the LattePanda via the Arduino core's UART port. We'll interface digital sensors like the water flow meter and digital temperature sensor, as well as ultrasonic and light sensors, directly with the LattePanda.

All collected data will be locally displayed on the DFRobot's 8.9-inch IPS display, providing real-time insight into the system's status.

The LattePanda 3 Delta then collects all the sensor data and transmits it to Blues Notehub via the cellular Notecard.

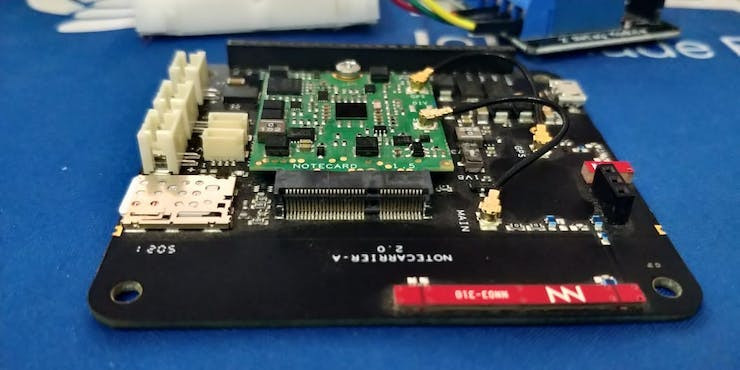

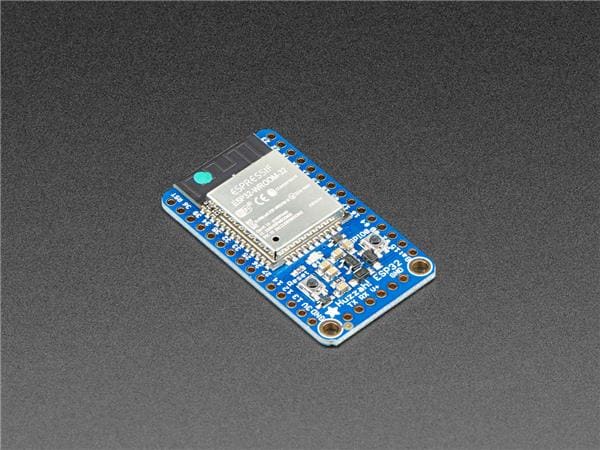

Next, we can use the Blues Notecard. The Blues Notecard is a compact, energy-efficient, and cost-effective cellular modem designed to connect any device to the internet via cellular networks. Accompanying it is the Blues Notecarrier, a carrier board that offers various power and connectivity options for the Notecard, including USB, battery, solar, and GPIO. Together, they constitute a comprehensive IoT solution, empowering developers to effortlessly and securely transmit data from sensors and devices to the cloud.

Utilizing Blues Notecard and Notecarrier offers numerous advantages:

- Broad Cellular Support: Depending on the Notecard model, they work across multiple cellular bands and regions, covering technologies such as 2G, 3G, 4G LTE, LTE-M, and NB-IoT.

- Built-in SIM Card: The Notecard includes an embedded SIM with bundled cellular data, so no separate carrier plan is needed to get started.

- User-Friendly JSON API: These components feature a straightforward JSON API, simplifying data transmission and reception for developers with just a few lines of code.

- Secure and Scalable Cloud Platform: They integrate seamlessly with a secure and scalable cloud platform, responsible for storing and managing data from connected devices.
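Under the hood, a Notecard request is a single JSON object with a `req` field, terminated by a newline when sent over the serial link. The note-python library's `card.Transaction()` takes care of this framing, so the helper below is purely illustrative: a minimal sketch that builds the same kind of `hub.set` request the complete script sends later. The helper name and the product UID in the usage example are hypothetical placeholders.

```python
import json


def notecard_request(req, **fields):
    """Serialize a Notecard request as one newline-terminated JSON line (illustrative)."""
    message = {"req": req, **fields}
    # Compact separators keep the line short; the trailing newline ends the request
    return (json.dumps(message, separators=(",", ":")) + "\n").encode("utf-8")


if __name__ == "__main__":
    # Hypothetical product UID; use the one from your own Notehub project
    print(notecard_request("hub.set", product="com.your-company:your-product", mode="continuous"))
```

In practice you would pass the equivalent dict to `card.Transaction()` and let note-python handle the serial framing and the response.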

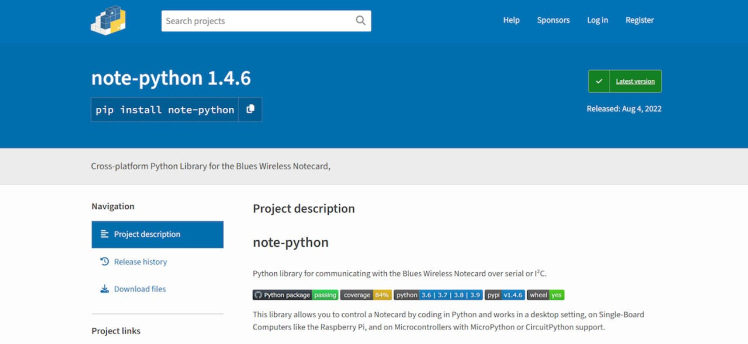

To establish connectivity, the Notecard can be linked to the LattePanda 3 Delta via a USB port. This enables the transmission of sensor data to Blues Notehub through the Python client. Blues provides the note-python library, which streamlines communication with the Notecard from Python.

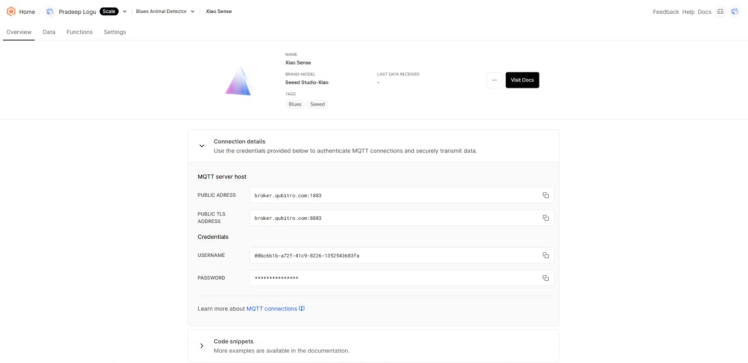

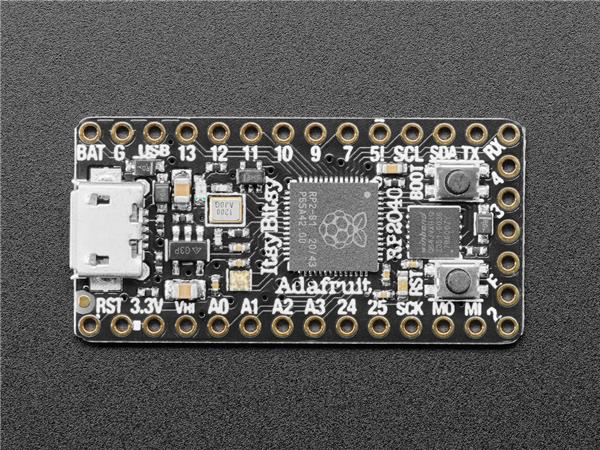

The next phase is to integrate a live camera that can capture plant growth and send image data via cellular IoT. For this, I used the XIAO ESP32S3 Sense.

The Seeed Studio XIAO ESP32S3 Sense is a compact development board that seamlessly integrates embedded machine learning and photography capabilities. This versatile board features a camera sensor, a digital microphone, and an SD card slot, making it a powerful tool for various applications. It also offers support for Wi-Fi and Bluetooth wireless communication. By deploying a webcam script on the Xiao ESP32 S3 Sense and utilizing a Python script, we can effortlessly capture images and transmit them to the cloud via the Blues Notecard.

Now, let's tackle the next step - keeping an eye on the environment around our setup. It might seem like a bit of a headache, especially with all the sensors I've already hooked up. But guess what? I stumbled upon a pretty cool solution: the Thingy:91. It's got its own set of environmental sensors built right in. With the Thingy:91, I can send the environmental data over to the LattePanda 3 Delta, making the monitoring job simpler.

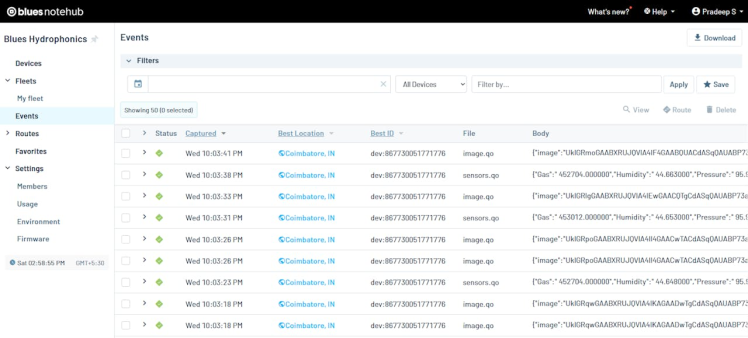

All the data from the Blues Notecard is captured by Blues Notehub, a cloud service that helps you connect, manage, and analyze data from your Notecard devices.

Blues Notehub acts as a secure proxy that communicates with the Notecard devices and routes their data to your cloud application of choice. It also provides tooling for managing fleets of devices, performing over-the-air firmware updates, setting environment variables, and configuring alerts and actions. You can access Blues Notehub through a web-based dashboard or a RESTful API.

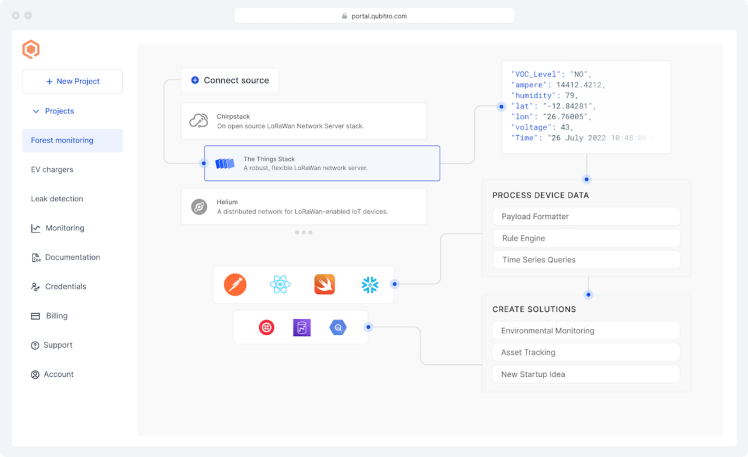

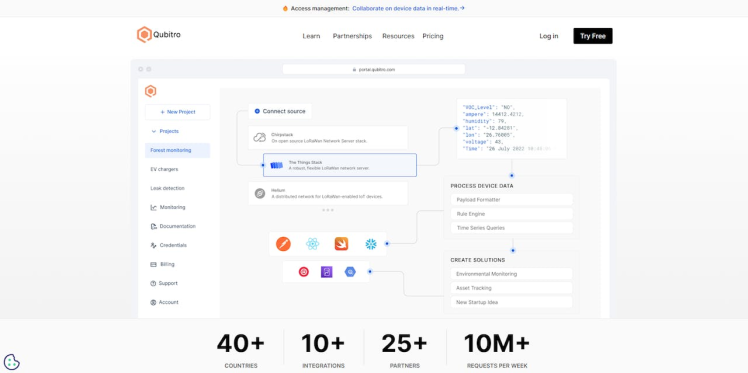

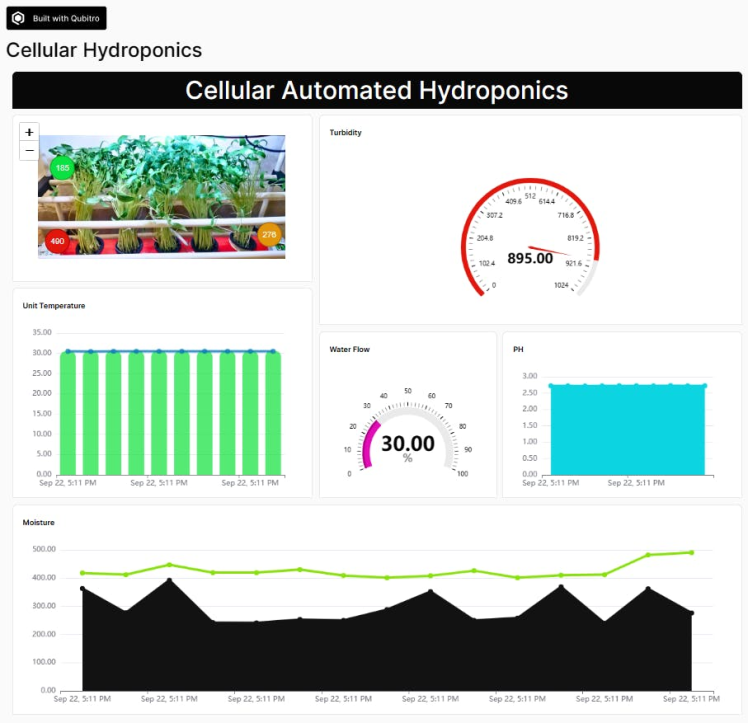

Finally, we will use the Qubitro cloud platform to visualize our hydroponics system’s data.

Qubitro is a platform that helps you connect, manage, and analyze data from your IoT devices. It is designed to make IoT development easy and accessible for everyone. Here are some features and benefits of Qubitro:

- Connect: You can connect any device to Qubitro using various protocols, such as MQTT, HTTP, or LoRaWAN. Qubitro supports multiple device types, such as Arduino, Raspberry Pi, ESP32, and more. You can also use Qubitro’s SDKs and APIs to integrate your devices with the platform.

- Manage: You can monitor and control your devices from a web-based dashboard. You can see your devices' status, location, and activity in real-time. You can also configure rules, alerts, and actions for your devices based on their data.

- Analyze: You can store and process your device data on Qubitro’s cloud platform. You can use Qubitro’s built-in tools or third-party services to visualize, query, and analyze your data. You can also apply machine learning and artificial intelligence to your data to gain insights and predictions.

Qubitro is used by companies of all sizes and industries, such as smart agriculture, smart city, smart home, smart health, and more. Qubitro aims to democratize IoT development and make it possible for anyone to create IoT applications.

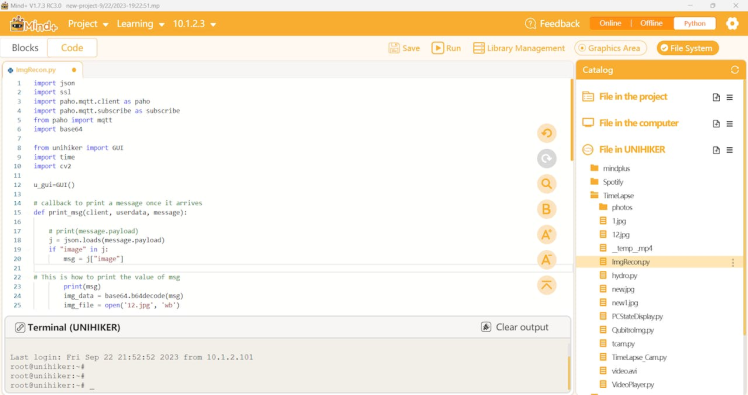

The final part is reconstructing the image from the Qubitro payload. For that, I used UNIHIKER.

UNIHIKER is a compact single-board computer with a built-in 2.8-inch touchscreen, onboard sensors such as a light sensor, an accelerometer, and a microphone, and Wi-Fi and Bluetooth connectivity. You can program UNIHIKER using a browser or various IDEs, and you can control its built-in sensors and hundreds of connected sensors and actuators using Python. UNIHIKER has a built-in SIoT service that allows you to store and access data on the device itself. UNIHIKER offers an innovative development experience for learning, coding, and creating.

With UNIHIKER, we can receive the live payload from Qubitro in Python and reconstruct the image data.

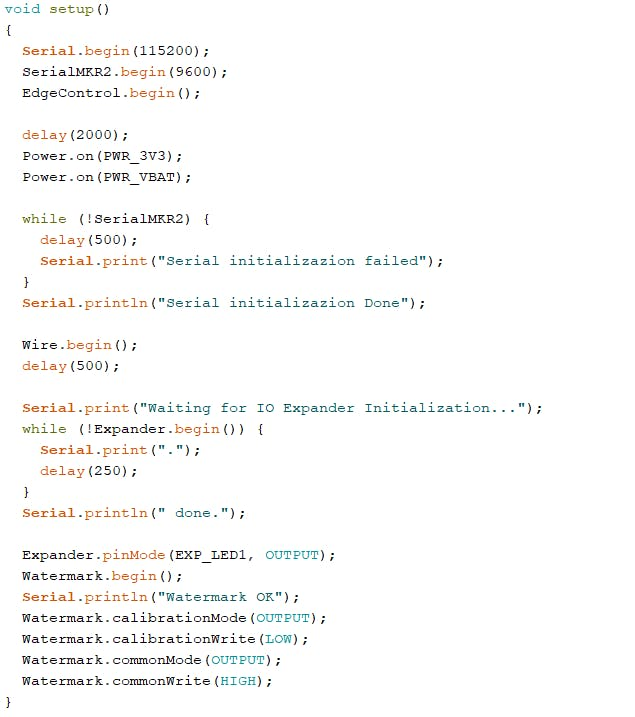

Step 1️⃣- Arduino Edge Control Setup:

Edge Control offers a range of features, including analog inputs, latching I/Os, and relay outputs. For now, we won't be using latching devices such as valves, or the solid-state relays. However, as the project scales up in the future, you might need to control valves and solid-state relays.

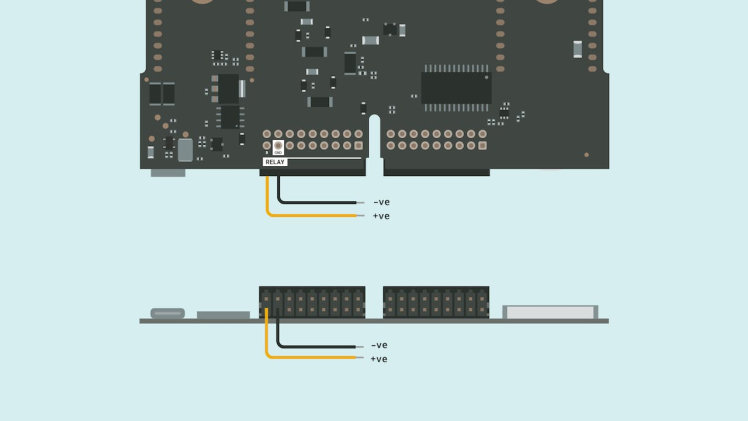

I have integrated Edge Control into my automated hydroponics system. In this setup, a continuously running pump circulates water throughout the system, so there's no need for additional valves or relays. Instead, I connected a mini water pump directly to the 5V supply of the Edge Control unit. To power the Edge Control, a 12V DC power supply is required.

You can directly connect the solar panel or DC power supply to the +ve and the -ve terminals of the Edge Control.

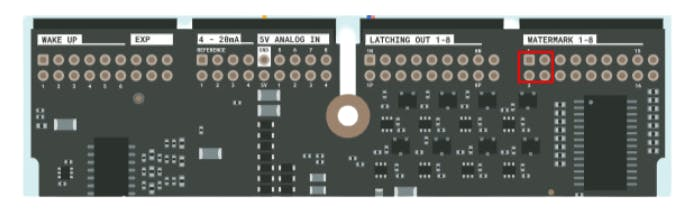

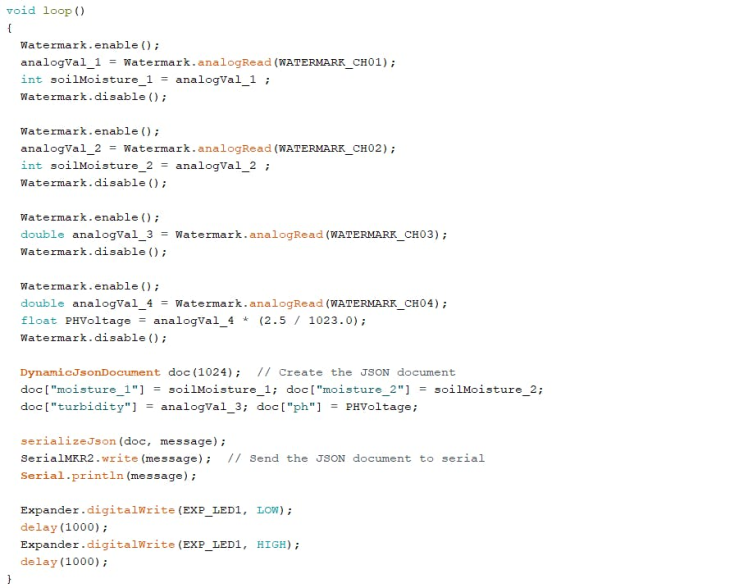

Then I used 4 (1, 2, 3 and 4) watermark inputs to connect my soil moisture, turbidity, and PH sensor.

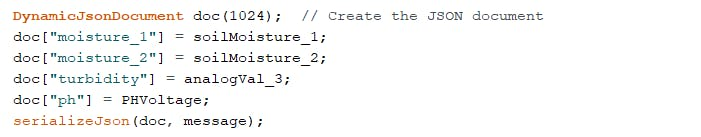

Once we have collected all 4-sensor data, we can construct a JSON with that.

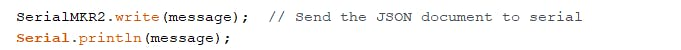

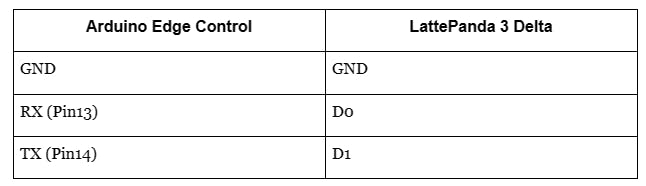
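The Edge Control code itself appears only in the screenshots, but the LattePanda sketch in Step 2 reads four keys from each message: `moisture_1`, `moisture_2`, `turbidity`, and `ph`. When debugging the serial link, a small host-side check can confirm that a line is valid JSON carrying those keys. This is a sketch, not part of the original project; the function name is hypothetical, and the same check also accepts the LattePanda sketch's output, which carries these keys plus the local sensor readings.

```python
import json

# Keys the LattePanda sketch in Step 2 reads from each Edge Control message
EDGE_KEYS = ("moisture_1", "moisture_2", "turbidity", "ph")


def parse_edge_message(line):
    """Return the decoded message as a dict, or None if it is not a complete reading."""
    try:
        data = json.loads(line)
    except json.JSONDecodeError:
        return None  # noise, a partial line, or an error string
    if not isinstance(data, dict) or not all(key in data for key in EDGE_KEYS):
        return None
    return data


if __name__ == "__main__":
    # Illustrative values only, not real readings
    print(parse_edge_message('{"moisture_1": 512, "moisture_2": 498, "turbidity": 730, "ph": 6.4}'))
    print(parse_edge_message("deserializeJson() returned InvalidInput"))
```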

Then we can transfer the data to the LattePanda 3 Delta via the serial port. For that, we are going to utilize one of the MKR (MKR2) slots in Edge Control.

Once the MKR2 slot is enabled, we can transmit the data through its UART pins.

Here is the MKR2 to LattePanda 3 Delta connection:

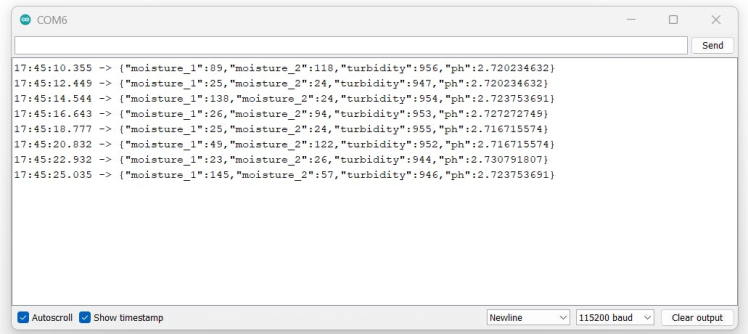

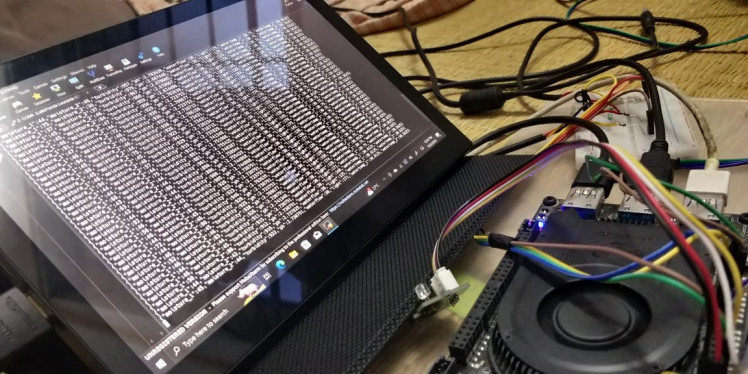

Here is the final Arduino IDE serial monitor output.

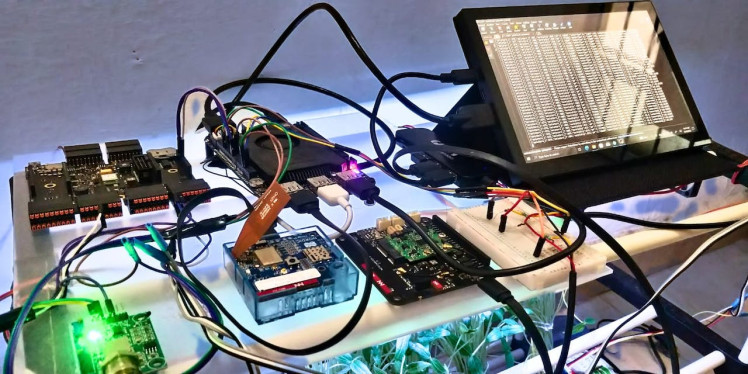

Step 2️⃣- LattePanda 3 Delta Arduino Setup:

The LattePanda 3 Delta is a powerful single-board computer, capable of running Windows 10 while featuring an internal Arduino core. This Arduino core is built on the Arduino Leonardo's ATmega32U4 microcontroller, so you can program and manage an array of sensors and actuators using the familiar Arduino IDE. Notably, the LattePanda 3 Delta includes a dedicated pin header designed to accommodate most Arduino shields and modules. You can interface with the Arduino core via the USB or serial port of the LattePanda 3 Delta.

Considering that Edge Control exclusively offers analog input units, we have chosen to leverage the capabilities of the LattePanda 3 Delta to connect our digital sensors. These sensors include the Dallas waterproof temperature sensor, water flow sensor, light sensor, and ultrasonic sensors.

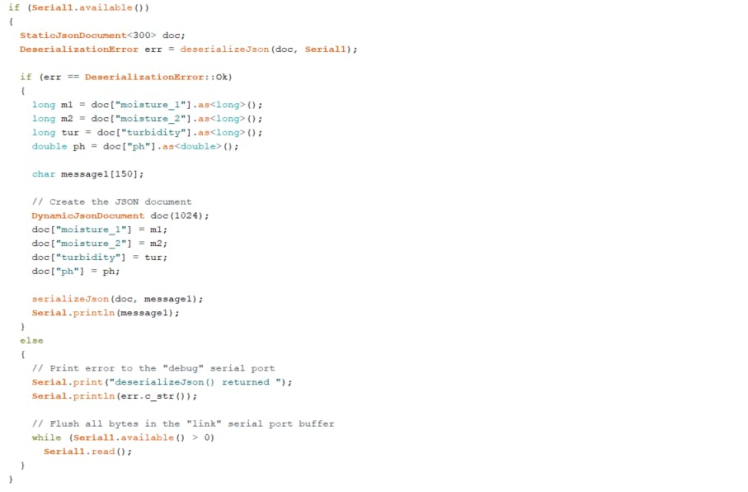

Since the Edge Control is already connected to the LattePanda 3 Delta, we now need to decode its data. The Arduino sketch below reads and decodes the incoming JSON.

Once the input JSON is decoded, connect the water flow sensor to digital pin 7, the light sensor to analog pin A0, and the two Dallas temperature sensors to digital pins 6 and 5 of the LattePanda 3 Delta. Connect all the sensor power pins to +5V and all the ground pins to GND on the LattePanda 3 Delta.

Here is the complete Arduino sketch.

#include <ArduinoJson.h>

#include <OneWire.h>

#include <DallasTemperature.h>

#define ONE_WIRE_BUS 6

OneWire oneWire(ONE_WIRE_BUS);

DallasTemperature Dsensors(&oneWire);

#define ONE_WIRE_BUS1 5

OneWire oneWire1(ONE_WIRE_BUS1);

DallasTemperature Dsensors1(&oneWire1);

#define SENSOR1 7

int sensorPin = A0;

int sensorValue = 0;

long currentMillis1 = 0;

long previousMillis1 = 0;

int interval = 1000;

float calibrationFactor = 4.5;

volatile byte pulseCount1;

byte pulse1Sec1 = 0;

float flowRate1;

unsigned int flowMilliLitres1;

unsigned long totalMilliLitres1;

unsigned long next = 0;

void pulseCounter1()

{

pulseCount1++;

}

void setup() {

// Initialize "debug" serial port

Serial.begin(115200);

Serial1.begin(9600);

Dsensors.begin(); // Start up the library

Dsensors1.begin(); // Start up the library

pinMode(13, OUTPUT);

pinMode(SENSOR1, INPUT_PULLUP);

pulseCount1 = 0;

flowRate1 = 0.0;

flowMilliLitres1 = 0;

totalMilliLitres1 = 0;

previousMillis1 = 0;

attachInterrupt(digitalPinToInterrupt(SENSOR1), pulseCounter1, FALLING);

}

void loop() {

// Check if the other Arduino is transmitting

digitalWrite(13, LOW); // turn the LED off by making the voltage LOW

if (Serial1.available())

{

StaticJsonDocument<300> doc;

DeserializationError err = deserializeJson(doc, Serial1);

if (err == DeserializationError::Ok)

{

long m1 = doc["moisture_1"].as<long>();

long m2 = doc["moisture_2"].as<long>();

long tur = doc["turbidity"].as<long>();

double ph = doc["ph"].as<double>();

currentMillis1 = millis();

if (currentMillis1 - previousMillis1 > interval) {

pulse1Sec1 = pulseCount1;

pulseCount1 = 0;

flowRate1 = ((1000.0 / (millis() - previousMillis1)) * pulse1Sec1) / calibrationFactor;

previousMillis1 = millis();

}

Dsensors.requestTemperatures();

Dsensors1.requestTemperatures();

double Dallas1 = Dsensors.getTempCByIndex(0);

double Dallas2 = Dsensors1.getTempCByIndex(0);

sensorValue = analogRead(sensorPin);

digitalWrite(13, HIGH);

delay(500);

char message1[150];

// Create the JSON document

DynamicJsonDocument doc(1024);

doc["moisture_1"] = m1;

doc["moisture_2"] = m2;

doc["turbidity"] = tur;

doc["ph"] = ph;

doc["waterflow"] = flowRate1;

doc["light"] = sensorValue;

doc["watertemp_1"] = Dallas1;

doc["watertemp_2"] = Dallas2;

serializeJson(doc, message1);

Serial.println(message1);

}

else

{

// Print error to the "debug" serial port

Serial.print("deserializeJson() returned ");

Serial.println(err.c_str());

// Flush all bytes in the "link" serial port buffer

while (Serial1.available() > 0)

Serial1.read();

}

}

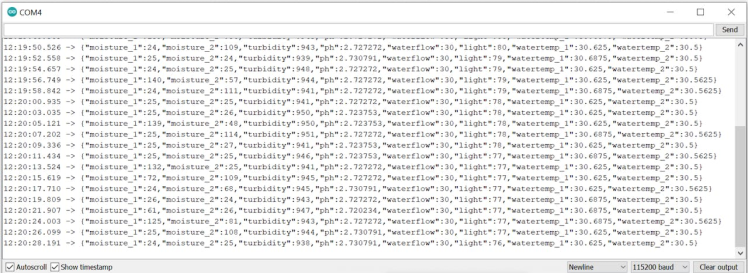

}And here is the final serial monitor result which collects all the data from the Edge Controller and the sensors. Then it will print it in a serial console.

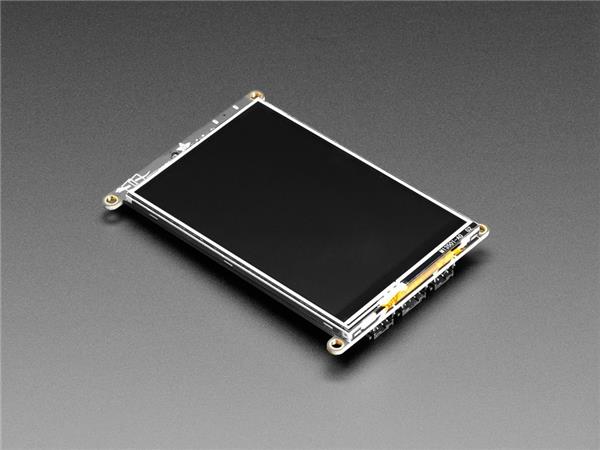
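The flow-rate line in the sketch turns a pulse count into litres per minute: it converts the pulses counted in the sampling window into pulses per second, then divides by `calibrationFactor`. Assuming, as that formula implies, that the sensor outputs `calibrationFactor` pulses per second for each L/min of flow, the same arithmetic can be mirrored in Python to sanity-check a calibration value against a measured volume. This is a sketch of the formula only (the function name is hypothetical), not part of the original project.

```python
def flow_rate_lpm(pulses, elapsed_ms, calibration_factor=4.5):
    """Mirror of the sketch's formula: (pulses per second) / calibration factor, in L/min."""
    if elapsed_ms <= 0:
        raise ValueError("elapsed_ms must be positive")
    pulses_per_second = (1000.0 / elapsed_ms) * pulses
    return pulses_per_second / calibration_factor


if __name__ == "__main__":
    # 45 pulses counted over a 1000 ms window with the sketch's factor of 4.5
    print(flow_rate_lpm(45, 1000))  # 10.0
```

If a bucket test shows a different volume than the integrated flow rate suggests, adjusting `calibrationFactor` in the Arduino sketch by the same ratio is the usual fix.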

To monitor the data locally, I used the DFRobot 8.9-inch IPS Touch Screen Display, which connects to the LattePanda 3 Delta via its HDMI port.

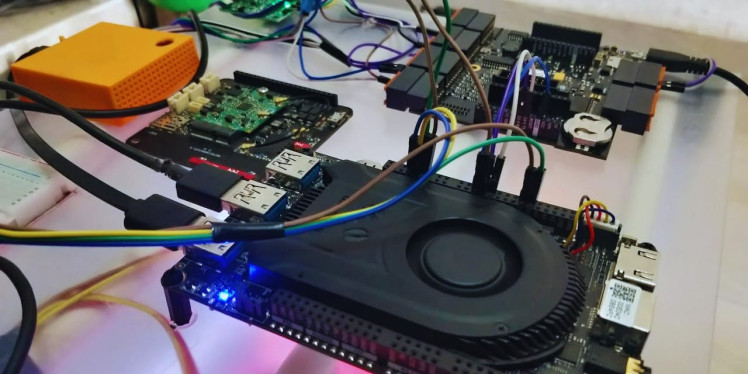

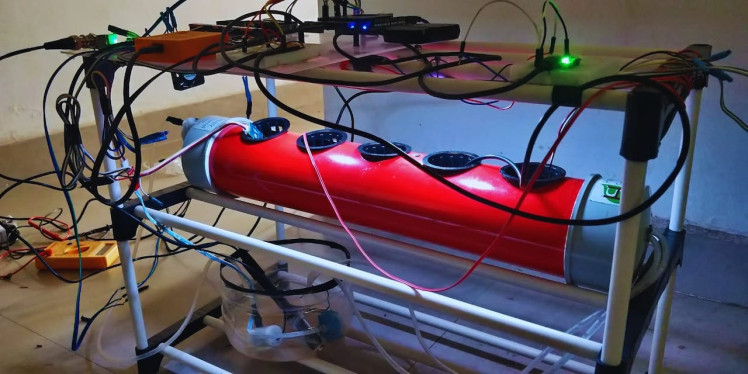

The final hardware setup before the cultivation:

After Cultivation:

Step 3️⃣- Thingy:91 Setup:

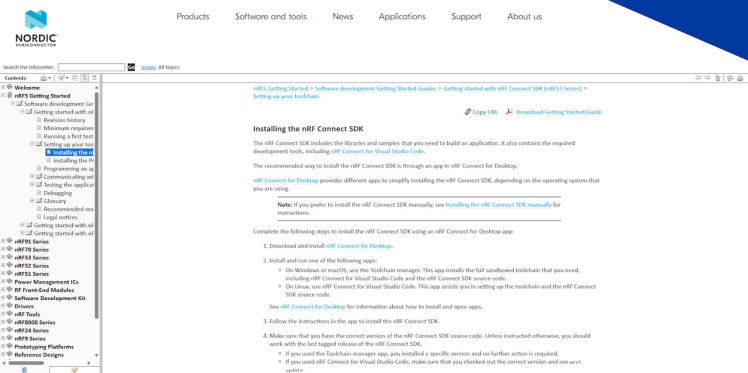

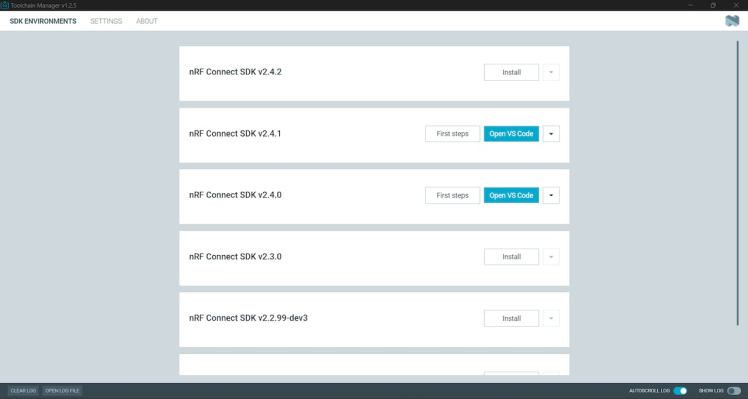

Thingy:91 is one of the best products from Nordic Semiconductor. It has built-in environmental sensors, which we will use to monitor the environment around our control system. First, install the nRF Connect SDK together with the nRF Connect for VS Code extension, following Nordic's setup instructions.

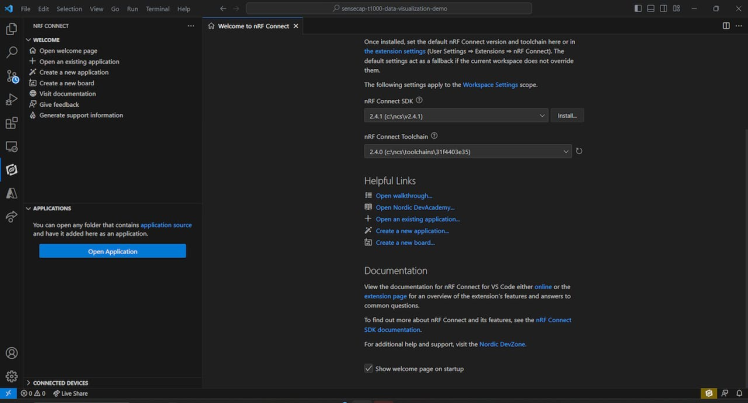

Once the installation is done, open VS Code via nRF Connect.

Then click Create New Application and select the Zephyr BME680 sample.

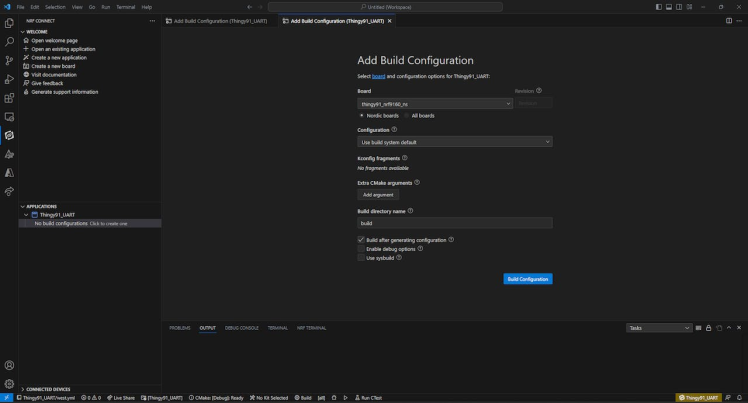

Next, build the project for Thingy:91.

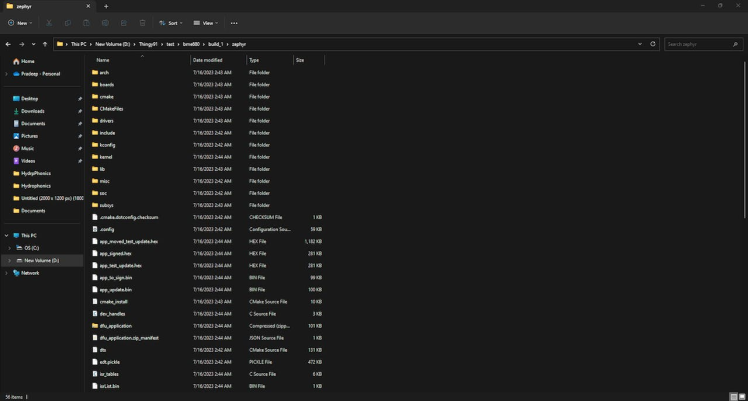

Once the build finishes, open the build folder in the project root, navigate to its zephyr subfolder, and copy the app_signed.hex file.

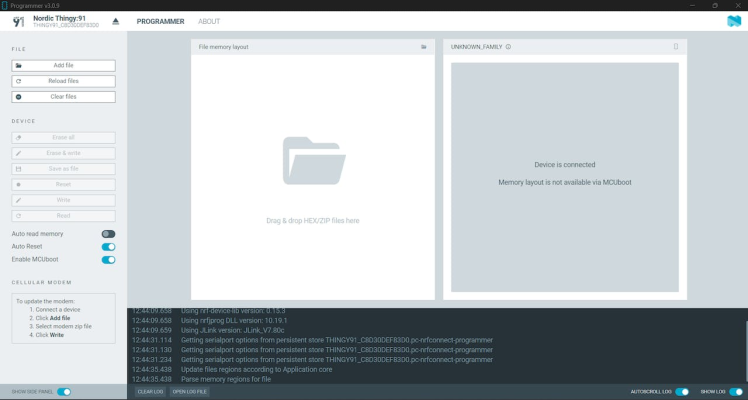

Next, open the nRF Connect Programmer and connect the Thingy:91 (press the boot button while connecting).

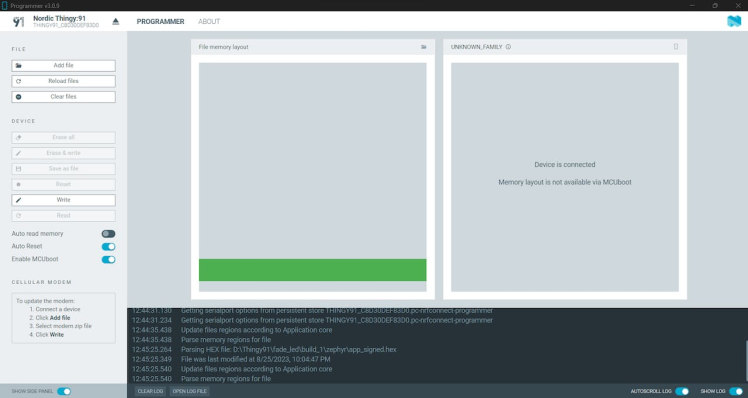

Then, add the hex file that we copied from the build.

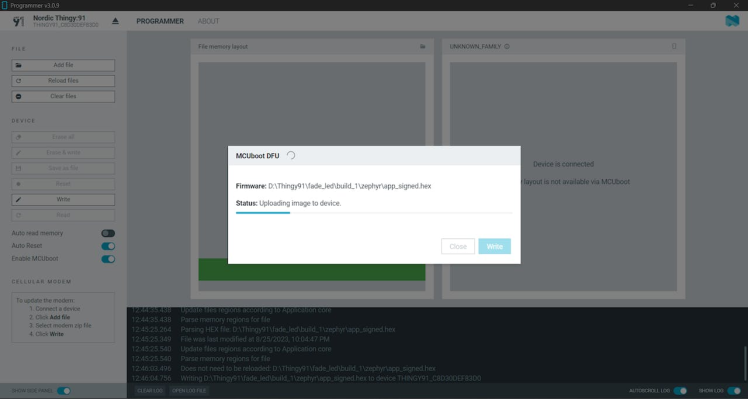

Next, click on “Write”,

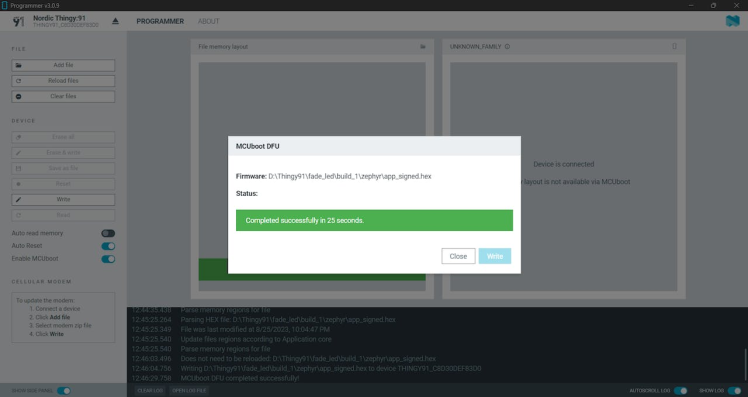

and wait for the writing to finish.

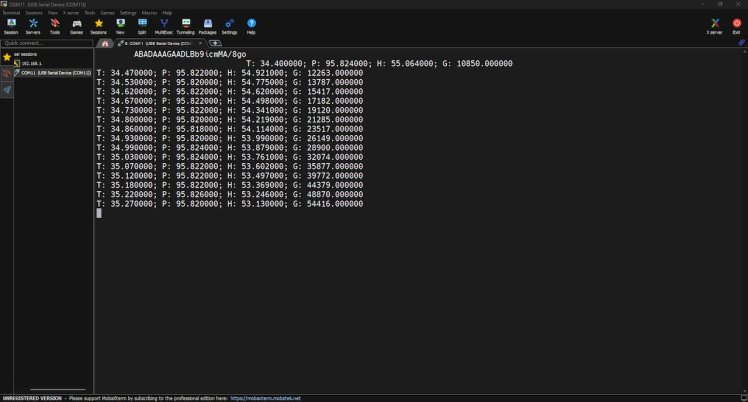

Next, open any serial monitor software and select the Thingy:91 device with a baud rate of 115200.

Now we should have all our Thingy:91’s environmental data. We can decode this data via Python. Note: You can view the hex file in the GitHub repo.
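The BME680 sample prints each reading as semicolon-separated `label: value` pairs labelled T, P, H, and G, which is why the Python client in Step 5 splits on `;` and `:`. A compact parser that returns floats could look like the sketch below (the function name is hypothetical). The units noted in the comments follow Zephyr's sensor API conventions and are worth confirming against your own firmware's output.

```python
# Single-letter labels printed by the sample, mapped to readable names.
# Units assumed from Zephyr's sensor API: degrees C, kPa, %RH, and ohms.
LABELS = {"T": "Temperature", "P": "Pressure", "H": "Humidity", "G": "Gas"}


def parse_bme680_line(line):
    """Parse 'T: ..; P: ..; H: ..; G: ..' into floats, or return None for other lines."""
    reading = {}
    for field in line.split(";"):
        label, sep, value = field.partition(":")
        label = label.strip()
        if not sep or label not in LABELS:
            continue  # not a 'label: value' pair we recognize (e.g. boot messages)
        try:
            reading[LABELS[label]] = float(value)
        except ValueError:
            return None  # corrupted value
    # Only accept complete readings with all four values
    return reading if len(reading) == len(LABELS) else None


if __name__ == "__main__":
    # Illustrative line in the sample's format
    print(parse_bme680_line("T: 23.988877; P: 97.648568; H: 36.524264; G: 1618.102909"))
```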

Step 4️⃣- Xiao ESP32 S3 Sense Setup:

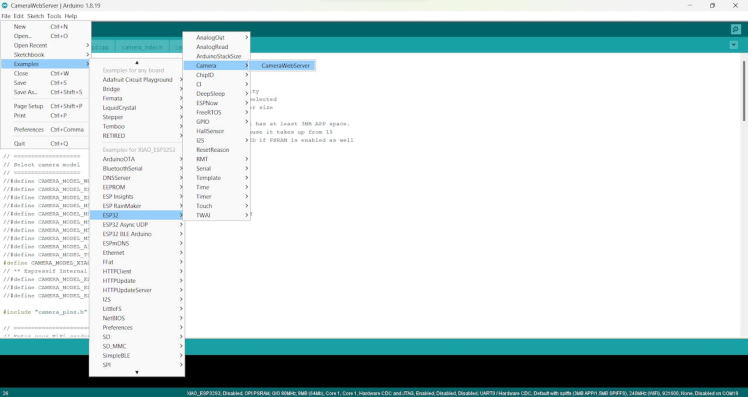

We are going to use the XIAO ESP32S3 Sense to capture live images of our hydroponics system over Wi-Fi, which a Python script then saves on the LattePanda 3 Delta. You can refer to the following documentation to learn more about the XIAO ESP32S3 Sense.

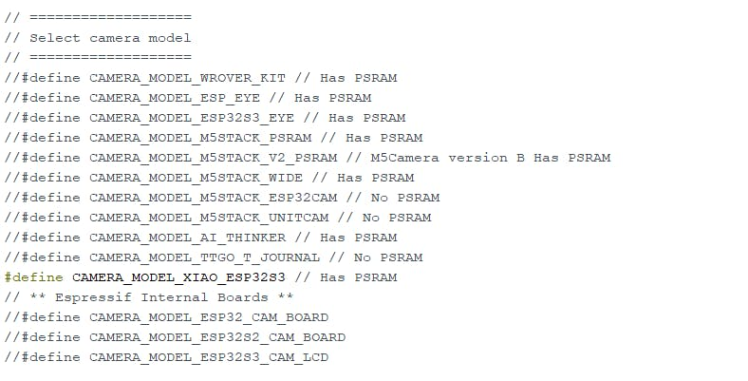

The camera firmware is the CameraWebServer example, which streams live video over the local Wi-Fi network. In the Arduino IDE, navigate to the XIAO ESP32S3's default examples and open the CameraWebServer example code.

In the sketch, select the XIAO ESP32S3 camera model (`CAMERA_MODEL_XIAO_ESP32S3`).

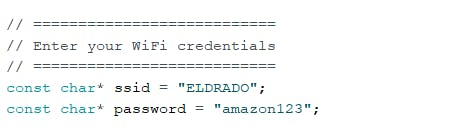

Enter your Wi-Fi credentials, then upload the code to the XIAO ESP32S3 Sense.

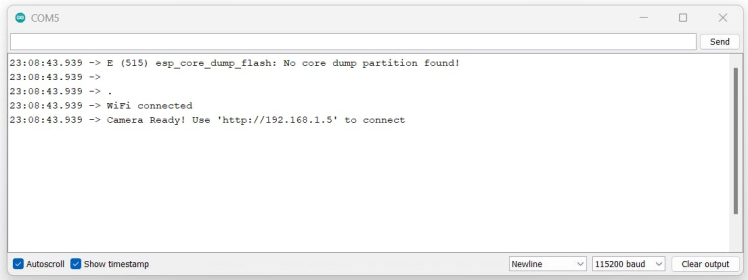

Look for the serial monitor output. It will show you the local IP address.

Open that IP address in the browser. You should see the following control panel.

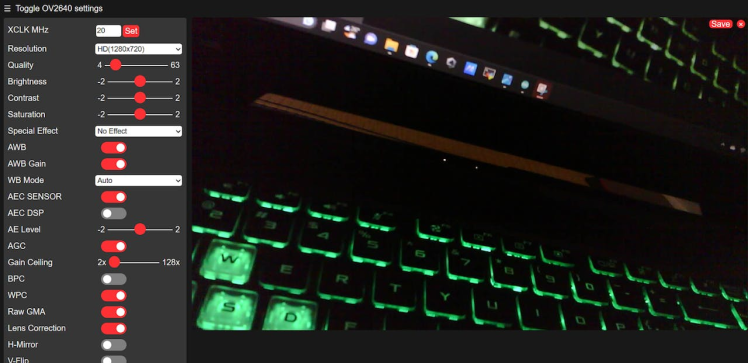

Set the desired resolution and then stop the stream. Appending `:81/stream` to the camera web server's URL (for example, http://192.168.1.9:81/stream) shows the live feed directly, without the control panel.

Now we can use OpenCV to capture and save images on the LattePanda 3 Delta.

Step 5️⃣- Python Client Setup:

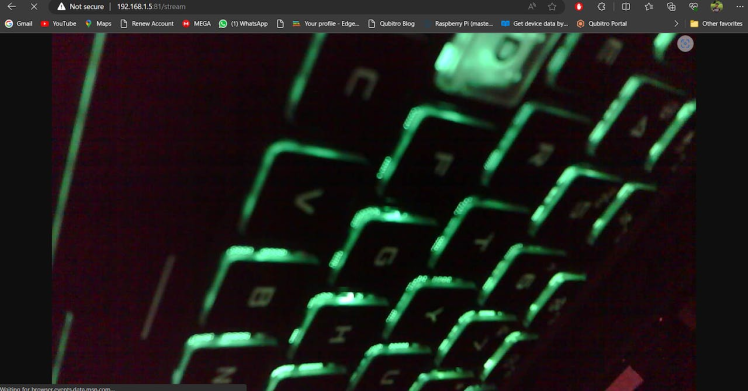

This Python script has four main parts. Part 1 captures the serial output of the LattePanda's Arduino core, which already includes the Edge Control's data. For that, we are going to use the `pyserial` library.

Part 2 captures the Thingy:91 live environmental data via the serial port. Part 3 captures and saves the image data from Xiao ESP32 S3 Sense. And Part 4 transmits all of the data to Blues Notehub via the Blues Notecard.

Let’s see each part individually:

Part 1: LattePanda Arduino Core to PySerial.

We have used LattePanda 3 Delta’s Arduino Core 2 to read our sensor data. Now we need to capture the serial port data and transfer it to Notehub. To do that, we can use this Python code snippet to get and decode the data.

lp_serial = serial.Serial('COM4', 115200, timeout=1)

line = lp_serial.readline().decode('utf-8').rstrip()

json_without_slash = json.loads(line)

print("Serial Data is " + str(json_without_slash))lp_serial = serial.Serial('COM4', 115200, timeout=1)

line = lp_serial.readline().decode('utf-8').rstrip()

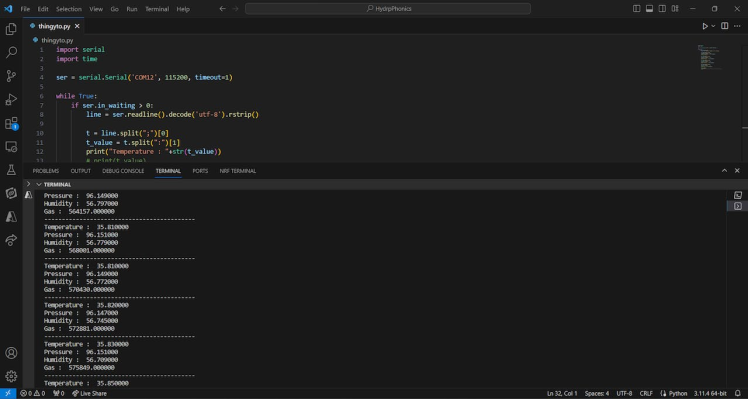

json_without_slash = json.loads(line)Part 2: Thingy:91 data to PySerial

As in the previous part, we capture the serial port data and split it into individual values, which are later added to the payload.

```python
import time

import serial  # pyserial

# COM12 is the Thingy:91 port on my machine; adjust it to yours
ser = serial.Serial('COM12', 115200, timeout=1)

while True:
    if ser.in_waiting > 0:
        line = ser.readline().decode('utf-8', errors='ignore').rstrip()
        # Readings look like "T: <temp>; P: <pressure>; H: <humidity>; G: <gas>"
        fields = line.split(";")
        if len(fields) < 4:
            continue  # skip boot messages and partial lines
        t_value, p_value, h_value, g_value = (f.partition(":")[2].strip() for f in fields[:4])
        print("Temperature : " + t_value)
        print("Pressure : " + p_value)
        print("Humidity : " + h_value)
        print("Gas : " + g_value)
        print("-------------------------------------------")
    time.sleep(1)
```

Here are the terminal results from the Python script.

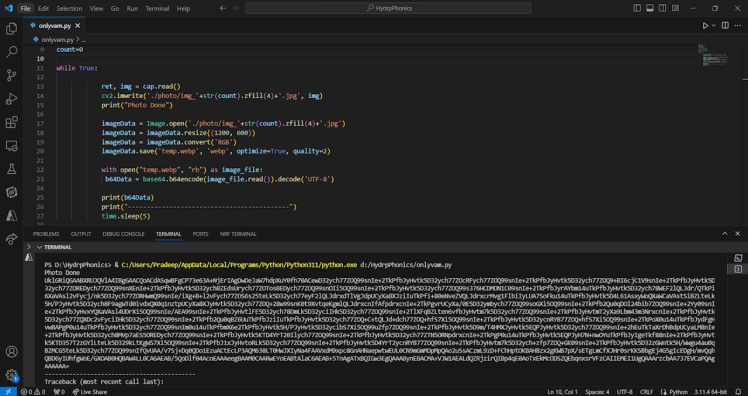

Part 3: Xiao ESP32 S3 Sense Image to Base64.

In this part, we capture the live video feed from the XIAO ESP32S3 Sense. OpenCV grabs and saves a frame, and Python then converts the captured image into a Base64 string.

```python
import base64
import os
import time

import cv2
from PIL import Image

# MJPEG stream served by the CameraWebServer sketch (use your board's IP)
cap = cv2.VideoCapture('http://192.168.1.9:81/stream')
os.makedirs('./photo', exist_ok=True)
count = 0

while True:
    ret, img = cap.read()
    if not ret:
        print("Frame grab failed, retrying")
        time.sleep(1)
        continue

    # Save the raw frame as a numbered JPEG
    path = './photo/img_' + str(count).zfill(4) + '.jpg'
    cv2.imwrite(path, img)
    print("Photo Done")
    count += 1

    # Shrink and re-encode as low-quality WebP to keep the payload small
    imageData = Image.open(path).resize((1200, 600)).convert('RGB')
    imageData.save('temp.webp', 'webp', optimize=True, quality=2)

    # Base64-encode the WebP bytes so they can travel inside a JSON body
    with open("temp.webp", "rb") as image_file:
        b64Data = base64.b64encode(image_file.read()).decode('UTF-8')
    print(b64Data)
    print("-------------------------------------------")
    time.sleep(5)
```

Here is the terminal result from the Python script.

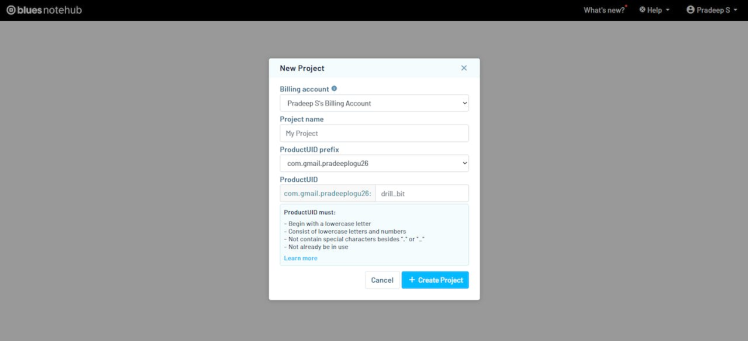

Note: Before moving on to Part 4, we need to create a new project in Blues Notehub.

Navigate to Blues Notehub and create a new project. Then copy the product UID, which we will use in Part 4.

Part 4: Python to Blues Notehub communication.

In this final part, all the captured data is packaged into JSON and forwarded to Blues Notehub. Replace the product UID placeholder with the one you copied from Notehub.

```python
productUID = "-----------------------------------------------"
```

Note: Before sending the Base64 image data to Notehub, check its size. The Notecard also provides APIs for sending large binary payloads, which can pass binary data directly to your Notehub routes.

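Here is a short Arduino snippet that transfers a binary payload:

```cpp
// Assumes the Notecard has already been initialized elsewhere in the sketch.
// Binary-transfer function names have changed between note-arduino/note-c
// releases, so check them against the Blues guide for your library version.
char buff[25] = "Hello World";

// Stage the payload bytes on the Notecard
// (strlen(buff) = bytes to send, sizeof(buff) = total buffer size)
Notecard::binaryTransmit((uint8_t *) buff, strlen(buff), sizeof(buff), false);

// Ask the Notecard to post the staged binary data to the Notehub route "MyRoute"
J *req = NoteNewRequest("web.post");
JAddStringToObject(req, "route", "MyRoute");
JAddBoolToObject(req, "binary", true);
NoteRequest(req);
```

To learn how to send large binary payloads to Notehub, follow the Blues Developers guide "Sending and Receiving Large Binary Objects".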

In my case, the payload is small enough that I can send the Base64-encoded image directly from my Python script.
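Base64 makes the image about a third larger than the WebP bytes: every 3 input bytes become 4 output characters, so the padded output length is 4 × ⌈n / 3⌉. A quick size check before calling `note.add` might look like the sketch below. `MAX_IMAGE_B64` is a hypothetical budget you would choose from the Blues documentation for your Notecard, not a documented constant, and the helper names are likewise hypothetical.

```python
import base64

# Hypothetical budget for the Base64 image string; pick a value from the Blues docs
MAX_IMAGE_B64 = 8 * 1024


def base64_length(n_bytes):
    """Length of padded Base64 output for n_bytes of input: 4 * ceil(n / 3)."""
    return 4 * ((n_bytes + 2) // 3)


def encode_if_fits(data, limit=MAX_IMAGE_B64):
    """Return the Base64 string for data, or None if it would exceed limit characters."""
    if base64_length(len(data)) > limit:
        return None
    return base64.b64encode(data).decode("ascii")


if __name__ == "__main__":
    demo = bytes(1000)  # stand-in for the contents of temp.webp
    print(base64_length(len(demo)))  # 1336
    print(encode_if_fits(demo) is not None)
```

In the capture code, `encode_if_fits(image_file.read())` could stand in for the direct `base64.b64encode` call, skipping or re-compressing the frame when it returns `None`.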

Run the complete Python script.

import notecard

import serial

import time

import cv2

import numpy as np

import base64

import serial

import notecard

import json

from PIL import Image

cap = cv2.VideoCapture(‘http://192.168.1.9:81/stream’)

count=0

productUID = "-------------------------"

if __name__ == '__main__':

lp_serial = serial.Serial('COM4', 115200, timeout=1)

thingy_serial = serial.Serial('COM10', 115200, timeout=1)

thingy_serial.flush()

note_serial = serial.Serial('COM7', 9600)

card = notecard.OpenSerial(note_serial, debug=True)

while True:

if thingy_serial.in_waiting > 0:

if thingy_serial.in_waiting > 0:

line = thingy_serial.readline().decode('utf-8').rstrip()

t = line.split(";")[0]

t_value = t.split(":")[1]

print("Temperature : "+str(t_value))

# print(t_value)

p = line.split(";")[1]

p_value = p.split(":")[1]

# print(p_value)

print("Pressure : "+str(p_value))

h = line.split(";")[2]

h_value = h.split(":")[1]

# print(h_value)

print("Humidity : "+str(h_value))

g = line.split(";")[3]

g_value = g.split(":")[1]

# print(g_value)

print("Gas : "+str(g_value))

Thingy_env= {"Temperature": t_value, "Humidity": h_value, "Pressure": p_value, "Gas":g_value }

line = lp_serial.readline().decode().rstrip()

# line = lp_serial.readline().decode('utf-8').rstrip()

json_without_slash = json.loads(line)

print("Serial Data is " + str(json_without_slash))

count=count+1

obj1 = json.dumps(Thingy_env)

obj2 = json.dumps(json_without_slash)

# Parse the JSON objects into Python dictionaries

dict1 = json.loads(obj1)

dict2 = json.loads(obj2)

# Merge the two dictionaries using the dictionary unpacking operator **

merged_dict = {**dict1, **dict2}

# # Print the merged dictionary

# print(merged_dict)

req = {"req": "hub.set"}

req["product"] = productUID

req["mode"] = "continuous"

card.Transaction(req)

time.sleep(5)

req = {"req": "note.add"}

req["file"] = "sensors.qo"

req["start"] = True

req["body"] = merged_dict

# print("Req is : " + str(req))

card.Transaction(req)

# capture

ret, img = cap.read()

# save file

cv2.imwrite('./photo/img_'+str(count).zfill(4)+'.jpg', img)

print("Photo Done")

imageData = Image.open('./photo/img_'+str(count).zfill(4)+'.jpg')

# imageData = Image.open('2.jpeg')

imageData = imageData.resize((400, 320))

imageData = imageData.convert('RGB')

imageData.save('temp.webp', 'webp', optimize=True, quality=2)

# convert b64

with open("temp.webp", "rb") as image_file:

b64Data = base64.b64encode(image_file.read()).decode('UTF-8')

# # # send a note.add with the image as the body

request = {'req': 'note.add',

'file': 'image.qo',

'sync': True,

'body': {

'image':b64Data

}}

# card.Transaction(request)

result = card.Transaction(request)

print(result)

print("-------------------------------------------")

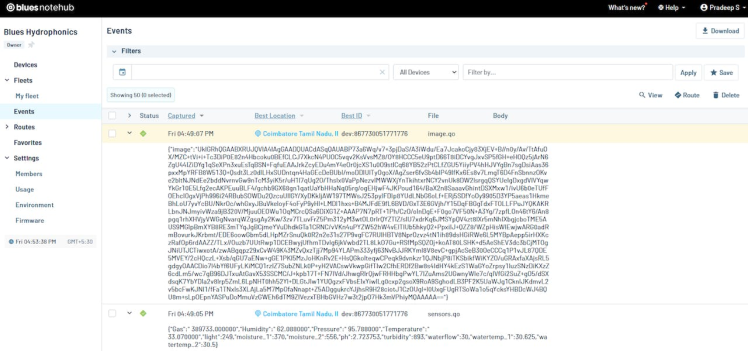

time.sleep(60)Next, open the Blues Notehub project page. You can see all our data in Notehub. The first payload is my base64 image data and the next payload is my sensor data.

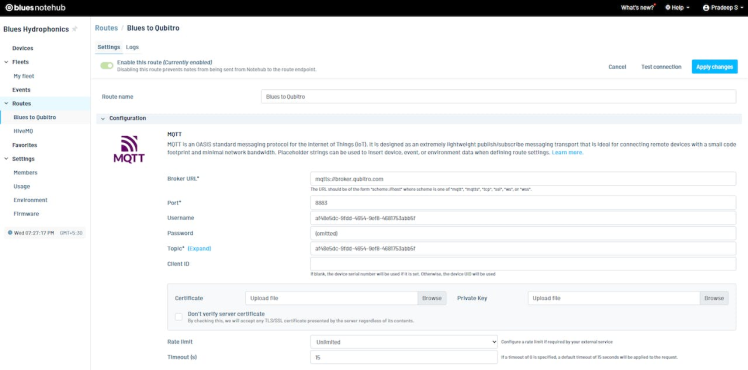

Step 6️⃣- Notehub Data Routing:

Once the data reaches Notehub, we are going to use Qubitro to visualize it and to create alerts based on the received data.

Qubitro simplifies the IoT development process by providing tools, services, and infrastructure that enable users to connect their devices, store and visualize their data, and build robust applications without coding. Qubitro supports various IoT protocols and platforms, such as LoRaWAN, MQTT, and The Things Stack.

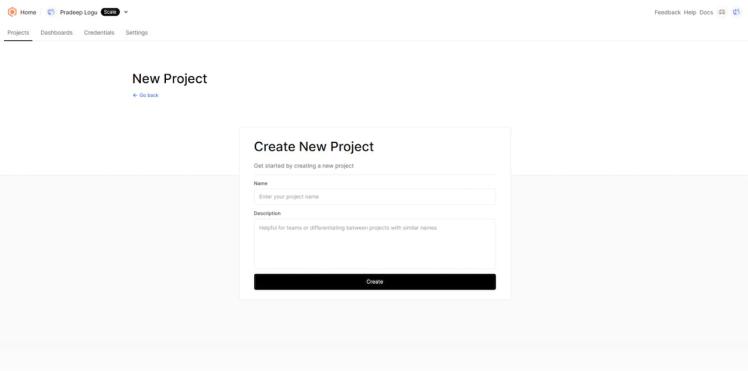

Navigate to the Qubitro Portal and create a new project.

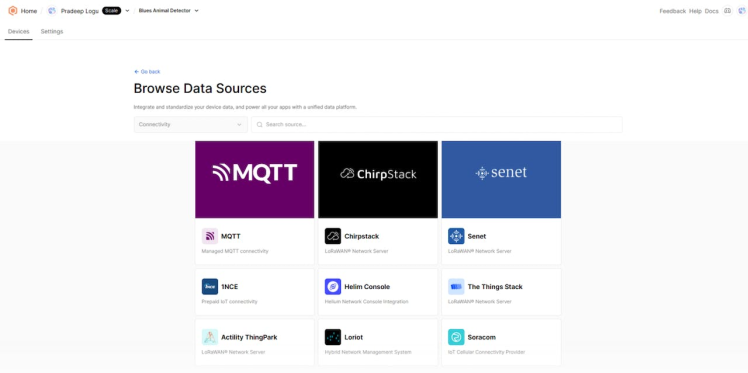

Then, select MQTT as the data source.

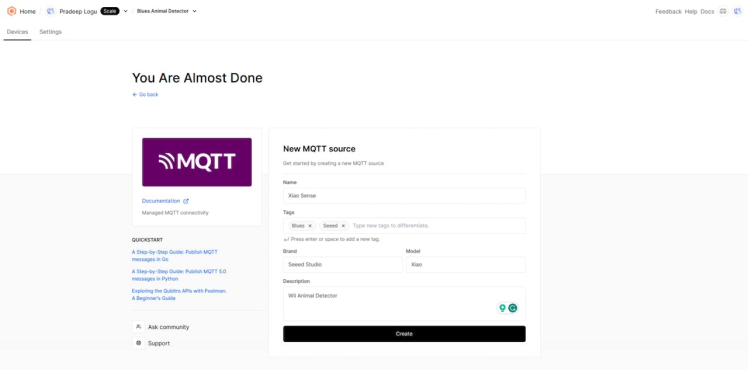

Next, enter all the input details as needed.

Then, open the created MQTT source and select the connection details. It will show you all the credentials. We need these credentials to transfer our data to Qubitro.

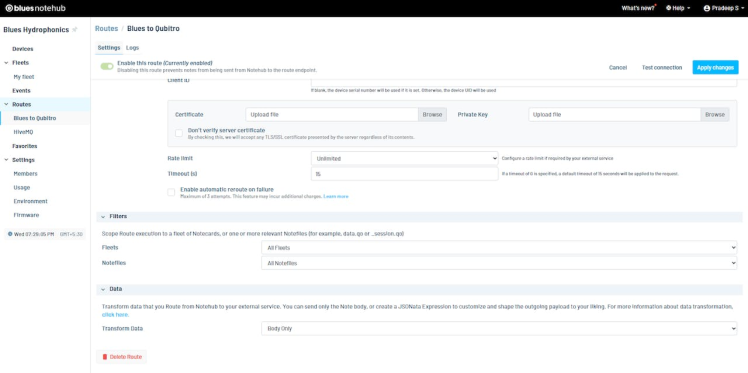

Let's move on to the Blues Notehub route page. Here, create a new MQTT route and set its username and password to your Qubitro credentials.

At the bottom, define which data should move to Qubitro.

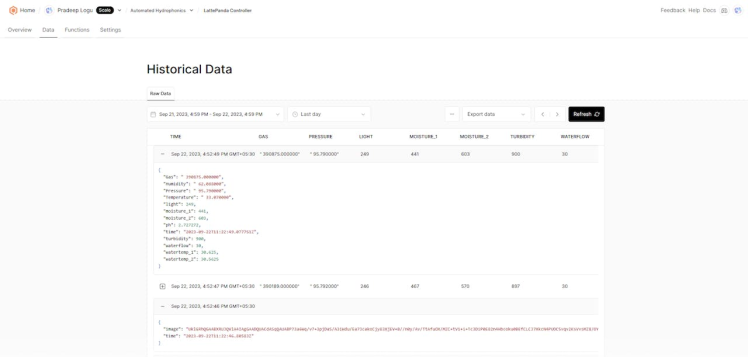

After the payload successfully transfers to Qubitro, open the Qubitro portal to monitor the incoming data.
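In the Python client, the Thingy:91 values are split out of the serial text and sent as strings (for example `"23.988877"`), while the LattePanda values arrive as JSON numbers. If a field shows up in Qubitro as text rather than as a chartable number, one option is to convert numeric strings before building the note body. A sketch under that assumption; the helper name is hypothetical.

```python
import math


def coerce_numbers(body):
    """Return a copy of body with numeric strings converted to int or float."""
    out = {}
    for key, value in body.items():
        if isinstance(value, str):
            try:
                value = int(value)
            except ValueError:
                try:
                    number = float(value)
                    if math.isfinite(number):  # keep strings like "nan" or "inf" as text
                        value = number
                except ValueError:
                    pass  # non-numeric strings (such as the Base64 image) stay as-is
        out[key] = value
    return out


if __name__ == "__main__":
    # Illustrative values only
    print(coerce_numbers({"Temperature": " 23.988877", "moisture_1": 512, "image": "UklGR..."}))
```

In the complete script, this would wrap the merged body, for example `merged_dict = coerce_numbers({**Thingy_env, **json_without_slash})`.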

Step 7️⃣- Qubitro Data Visualization:

To create a dashboard, navigate to the Qubitro portal, click on the device that you want to use for the dashboard, and then click on the Data tab. You should see a table with the data that the device is sending, such as topic, payload, timestamp, etc.

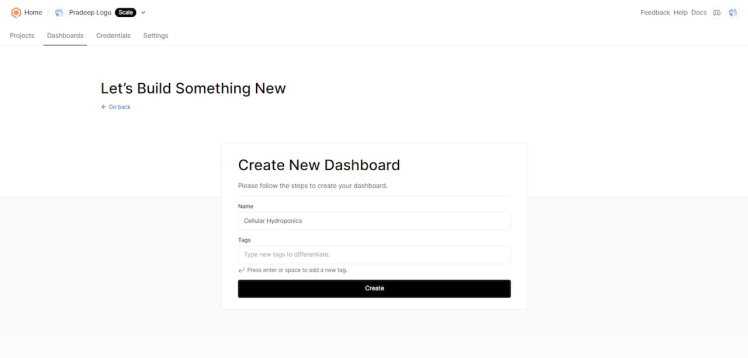

Now go to the Dashboards section and click on the Create Dashboard button. You will be asked to enter a name and a description for your dashboard. For this example, we will name it "Cellular Hydroponics" and enter the description. Then click on the Create button.

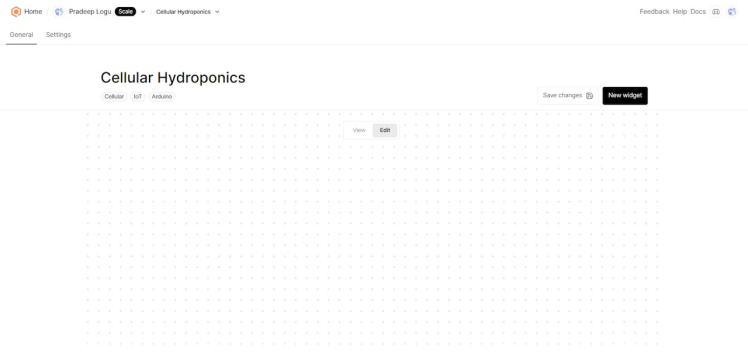

You will be taken to the dashboard editor, where you can add widgets to display your data. A widget is a graphical element that shows a specific type of data, such as a chart, a gauge, a table, etc.

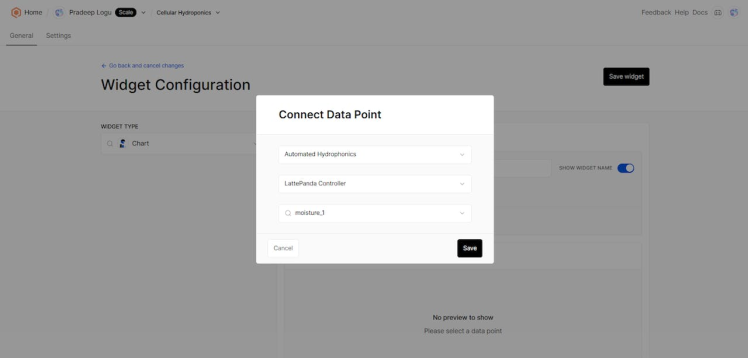

To add a widget, click on the Add Widget button and select the type of widget that you want to use.

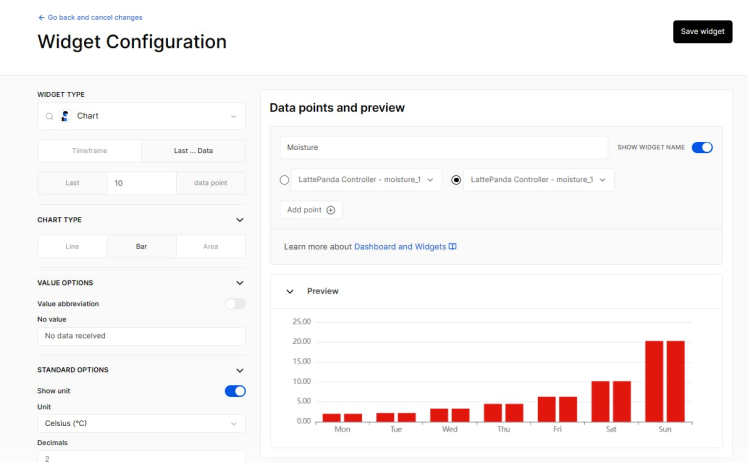

For this example, we will use two line charts: one for Moisture 1 and one for Moisture 2.

After selecting the widget type, you will see a configuration panel where you can customize the widget settings, such as title, size, color, data source, etc. For the first chart, we will set the title to "Moisture 1" and the color to "Red", and we will title the second chart "Moisture 2".

Finally, we will click on the Save button. Now, we can repeat the same process for all our data. We can drag and drop them to arrange them as we like.

The last step is to save our dashboard by clicking on the Save Dashboard button. We can also preview our dashboard by clicking on the Preview Dashboard button. We should see our dashboard with live data from our device.

Step 8️⃣- UNIHIKER Image Reconstruction:

To reconstruct the image from the Base64 data, we use UNIHIKER with a Python script that automatically shows the live image payload from the Qubitro cloud. First, enter your Qubitro credentials in the Python script below and run it on UNIHIKER.

The script receives the Base64 string, rebuilds the image, and displays it on the UNIHIKER screen.

```python
import base64
import json
import ssl

import cv2
import paho.mqtt.client as paho
import paho.mqtt.subscribe as subscribe
from unihiker import GUI

u_gui = GUI()


# Callback that runs for every MQTT message received from Qubitro
def print_msg(client, userdata, message):
    j = json.loads(message.payload)
    if "image" not in j:
        return  # sensor-only payloads carry no image

    msg = j["image"]
    print("Received image:", len(msg), "Base64 characters")

    # Decode the Base64 string back into image bytes and write them to disk
    img_data = base64.b64decode(msg)
    with open('12.jpg', 'wb') as img_file:
        img_file.write(img_data)

    img = cv2.imread('12.jpg', cv2.IMREAD_UNCHANGED)
    if img is None:
        print("Could not decode the image")
        return

    # Scale to 60% of the original size so it fits the 240-pixel-wide screen
    scale_percent = 60
    width = int(img.shape[1] * scale_percent / 100)
    height = int(img.shape[0] * scale_percent / 100)
    resized = cv2.resize(img, (width, height))
    cv2.imwrite("new1.jpg", resized)

    u_gui.draw_image(image="new1.jpg", x=0, y=30)


# TLS context for the secure connection to the Qubitro broker (verifies the server certificate)
sslSettings = ssl.create_default_context()

# Fill in the MQTT credentials from your Qubitro connection details
auth = {'username': "", 'password': ""}

# Subscribe to all topics and block, calling print_msg for each message
subscribe.callback(print_msg, "#", hostname="broker.qubitro.com", port=8883,
                   auth=auth, tls=sslSettings, protocol=paho.MQTTv31)
```
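The capture script on the LattePanda encodes each frame as WebP before Base64-encoding it, so the bytes decoded on the UNIHIKER are WebP data even though the script above writes them to `12.jpg`. OpenCV's `imread` identifies the format from the file content rather than the extension, so this normally still works when OpenCV is built with WebP support. If you prefer to name the file by its real format, checking the leading "magic bytes" is enough. A sketch; the helper names are hypothetical.

```python
import base64

# Leading "magic bytes" for formats this project might produce
SIGNATURES = (
    (b"\xff\xd8\xff", "jpg"),
    (b"\x89PNG\r\n\x1a\n", "png"),
)


def sniff_image_type(data):
    """Guess the image format from the first bytes of the data, or return None."""
    # WebP is a RIFF container with "WEBP" at bytes 8-11
    if data[:4] == b"RIFF" and data[8:12] == b"WEBP":
        return "webp"
    for magic, ext in SIGNATURES:
        if data.startswith(magic):
            return ext
    return None


def save_b64_image(b64_string, stem="latest"):
    """Decode a Base64 image and save it with an extension that matches its content."""
    data = base64.b64decode(b64_string)
    path = stem + "." + (sniff_image_type(data) or "bin")
    with open(path, "wb") as f:
        f.write(data)
    return path
```

In the UNIHIKER callback, `path = save_b64_image(msg)` could replace the hard-coded `12.jpg`, with `cv2.imread(path, cv2.IMREAD_UNCHANGED)` reading it back.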

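Wrap-up:

This project aimed to design and implement a smart hydroponics system that uses cellular IoT technology to monitor and control the growth of plants. The system combined several controllers (Arduino Edge Control, LattePanda 3 Delta, XIAO ESP32S3 Sense, Thingy:91, and UNIHIKER), a range of sensors, a Blues Notecard for cellular connectivity, and the Blues Notehub and Qubitro cloud platforms. It measured soil moisture, pH, turbidity, water flow, water temperature, and light levels, along with ambient temperature, humidity, pressure, and gas readings from the Thingy:91, and it captured periodic images of the plants. Qubitro provided real-time data visualization, and UNIHIKER displayed the latest plant image.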

The project demonstrated the feasibility and benefits of using cellular IoT for smart agriculture applications. The project also identified some challenges and limitations of the current technology, such as power consumption, network coverage, and security issues. It suggested some improvements and future work, such as integrating solar panels, adding more sensors and actuators, and implementing encryption and authentication mechanisms.
